Server virtualization involves partitioning a physical server into multiple virtual machines, each capable of running independent operating systems and applications. This technology enables more efficient use of hardware resources, allowing multiple workloads to coexist on a single physical server. It significantly reduces energy consumption and ultimately contributes to a more sustainable and eco-conscious approach to technology. This blog post explores how server virtualization has taken center stage in the green IT revolution.

Areas of Green IT

Green IT, or Green Information Technology, is a philosophy that emphasizes the responsible use of technology to minimize its environmental impact. By adopting practices that prioritize energy efficiency, resource conservation, and waste reduction, businesses can play a pivotal role in reducing their ecological footprint. This aligns with global sustainability goals and leads to cost savings and operational efficiency improvements.

Energy efficiency in IT infrastructure is crucial. It involves optimizing the consumption of electricity and resources to minimize waste. This is achievable through technologies like virtualization, which allows for consolidating multiple virtual machines onto a single physical server, significantly reducing the overall energy consumption. Moreover, resource conservation involves efficiently utilizing hardware and software to extend their lifespan, minimizing the need for constant upgrades and replacements. Lastly, waste reduction focuses on responsible disposal and recycling practices to minimize electronic waste, creating a cleaner environment.

Significance of Reducing Carbon Emissions in Green IT

Reducing carbon emissions is a pivotal goal in Green IT. The IT sector accounts for a significant share of global carbon emissions, and adopting sustainable practices can lead to substantial reductions. The World Economic Forum’s Global Risks Report consistently lists environmental risks, including carbon overload, among the top global threats. These risks can lead to economic instability, impacting industries, supply chains, and infrastructure. By minimizing energy consumption and employing efficient technologies like server virtualization, organizations can make substantial strides towards a greener and more environmentally conscious IT infrastructure.

Why Invest in Server Virtualization?

Server virtualization offers many benefits, with cost savings and efficiency leading the way.

Cost Savings

Server virtualization is a game-changer when it comes to cost savings. Businesses can significantly reduce their hardware expenses by consolidating multiple workloads as virtual machines on a single physical server. The savings include not only the cost of purchasing new servers but also the expenses associated with maintenance, cooling, and physical space requirements. There is a broader economic backdrop as well: the cost of natural disasters related to climate change and carbon overload is substantial, reaching approximately $268 billion globally in 2020 alone.

Energy Savings

Traditional server setups often operate at a fraction of their capacity, leading to inefficient resource allocation and high energy consumption. Server virtualization addresses this issue by enabling businesses to utilize their hardware to its full potential. Virtual machines can dynamically allocate resources based on demand, ensuring optimal performance and reducing waste. A U.S. Environmental Protection Agency (EPA) report found that server virtualization can lead to energy savings of up to 80%. By adopting server virtualization, businesses can reap the benefits of reduced energy consumption, resulting in lower electricity bills and a lighter environmental impact. The reduced hardware footprint also leads to lower cooling costs, further contributing to overall cost savings.

Optimized Resource Allocation
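
To illustrate how consolidating underused servers translates into lower power draw, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the utilization figures, the 80% per-host ceiling (`cap`), the first-fit-decreasing packing rule, and the linear power model with 200 W idle and 400 W peak. It considers CPU utilization only and ignores memory, I/O, and hypervisor overhead, so treat it as a rough model rather than a sizing tool.

```python
from typing import List


def consolidate(loads: List[float], cap: float = 0.8) -> List[List[float]]:
    """Pack workload utilization fractions onto hosts (first-fit decreasing).

    `cap` is a hypothetical per-host utilization ceiling that leaves headroom.
    """
    hosts: List[List[float]] = []
    for load in sorted(loads, reverse=True):
        if not 0.0 <= load <= cap:
            raise ValueError(f"load {load} must be between 0 and cap {cap}")
        for host in hosts:
            # Small tolerance guards against floating-point rounding.
            if sum(host) + load <= cap + 1e-9:
                host.append(load)
                break
        else:
            hosts.append([load])  # no existing host had room
    return hosts


def host_power_watts(utilization: float,
                     idle_watts: float = 200.0,
                     peak_watts: float = 400.0) -> float:
    """Assumed linear power model: idle draw plus a share of the idle-to-peak range."""
    return idle_watts + (peak_watts - idle_watts) * utilization


# Hypothetical fleet: ten physical servers, each only 10-20% utilized.
loads = [0.10, 0.15, 0.20, 0.10, 0.15, 0.20, 0.10, 0.15, 0.20, 0.15]

power_before = sum(host_power_watts(u) for u in loads)
hosts = consolidate(loads)
power_after = sum(host_power_watts(sum(h)) for h in hosts)

print(f"Hosts: {len(loads)} -> {len(hosts)}")
print(f"Power: {power_before:.0f} W -> {power_after:.0f} W "
      f"({1 - power_after / power_before:.0%} lower)")
```

With these made-up inputs, the ten workloads fit on two hosts and the modeled draw falls from 2,300 W to 700 W. Most of the saving comes from eliminating idle power: the useful work is unchanged, but eight machines no longer sit powered on while mostly unused.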

In traditional server setups, it’s common for individual servers to operate at a fraction of their capacity. This inefficiency results in wasted resources and increased energy consumption. Server virtualization addresses this issue by allowing businesses to make the most out of their existing hardware. Virtualization technology enables dynamic resource allocation, meaning that each virtual machine receives precisely the resources it needs to operate efficiently. This eliminates the inefficiencies associated with static resource allocation in traditional setups. Imagine a scenario where every computer in your office adapts its performance to the task at hand. That’s the power of virtualization.

Flexibility and Scalability

Businesses today operate in a dynamic environment. Needs change, and they change fast. Server virtualization provides the agility to adapt quickly to these changes without needing constant hardware upgrades. With virtualization, adding a new application or expanding an existing one is as simple as creating a new virtual machine. Investing in additional physical servers is unnecessary, saving both time and money. This flexibility ensures that businesses can respond promptly to evolving demands, staying competitive in today’s fast-paced market. Whether scaling up to meet increased workloads or scaling down during slower periods, virtualization provides the flexibility to adjust resources on the fly. This means businesses can operate efficiently and confidently, knowing their IT infrastructure can meet their changing needs.

How Does Server Virtualization Help to Reduce CO2 Emissions?
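
As a back-of-the-envelope illustration of how server power draw translates into CO2, the following Python sketch converts a steady IT load in watts into annual emissions. The function name `annual_co2_kg`, the facility overhead factor (`pue`, power usage effectiveness), the grid emission factor, and the before-and-after wattages are all hypothetical values chosen for the example, not measurements from any study cited in this post. Real figures vary widely by data center and by region.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_co2_kg(it_power_watts: float,
                  pue: float = 1.5,
                  grid_kg_co2_per_kwh: float = 0.4) -> float:
    """Estimate yearly CO2 (kg) for a constant IT load.

    pue: assumed ratio of total facility energy to IT energy (cooling etc.).
    grid_kg_co2_per_kwh: assumed emission factor of the local electricity grid.
    """
    if it_power_watts < 0 or pue < 1.0 or grid_kg_co2_per_kwh < 0:
        raise ValueError("power and grid factor must be >= 0, and pue >= 1.0")
    annual_kwh = it_power_watts / 1000.0 * HOURS_PER_YEAR * pue
    return annual_kwh * grid_kg_co2_per_kwh


# Hypothetical scenario: ten lightly loaded servers at 230 W each,
# consolidated onto two busier virtualization hosts at 350 W each.
before_kg = annual_co2_kg(10 * 230.0)
after_kg = annual_co2_kg(2 * 350.0)

print(f"Before: {before_kg:,.0f} kg CO2 per year")
print(f"After:  {after_kg:,.0f} kg CO2 per year")
print(f"Reduction: {1 - after_kg / before_kg:.0%}")
```

Because the overhead factor and the grid factor multiply both scenarios equally, the percentage reduction in this simple model depends only on the ratio of power draw. The absolute tonnage saved, however, depends heavily on how efficient the facility is and how clean the local grid is.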

Traditional server setups are known for their energy-hungry nature. They involve numerous physical servers, each with its own power requirements and cooling needs. This leads to a significant carbon footprint, as the energy demand for these servers directly contributes to CO2 emissions. A study by the Green Electronics Council paints a compelling picture: firms implementing server virtualization technologies reduced their CO2 emissions by an impressive average of 63% compared to those relying solely on physical servers.

Server virtualization cuts energy consumption and CO2 emissions in several ways. By allowing multiple virtual machines to operate on a single physical server, it reduces the number of servers needed. This consolidation leads to a corresponding drop in energy usage and CO2 emissions. Moreover, virtualization ensures the smart use of resources. Each virtual machine gets what it needs, when it needs it. This means no more over-provisioning of resources, which is a common inefficiency in traditional server setups. Virtualization platforms also come equipped with power management features. These features dynamically adjust the power consumption of servers based on workload demands. This responsive approach further minimizes energy usage and, in turn, CO2 emissions.

Security and Server Virtualization

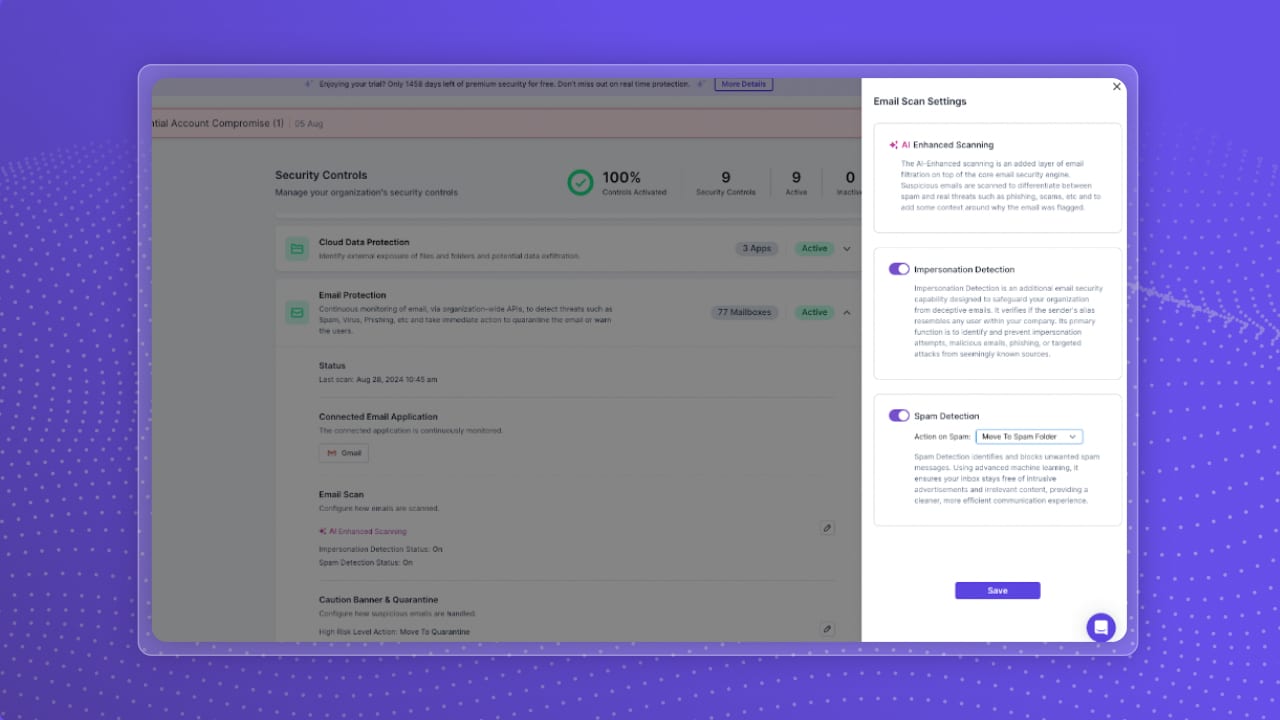

Managing security in traditional server setups can be complex and daunting. With multiple physical servers, each requiring individual attention, it’s easy for security gaps to emerge. This complexity can lead to vulnerabilities that malicious actors might exploit. Server virtualization simplifies this process. Businesses can centralize their security measures by consolidating multiple virtual machines onto a single physical server. This means fewer points of entry for potential threats, making monitoring and protecting sensitive data easier. Virtualization platforms come equipped with advanced security features that provide additional protection. These features include secure hypervisors, network segmentation, and secure boot processes, all working together to safeguard critical business data. Virtualization is a powerful tool in fortifying your business against cyber threats. It’s like having a digital security guard who’s always on duty, ensuring your sensitive information stays safe and secure.

Overcoming Challenges in Implementing Server Virtualization

Implementing server virtualization might seem like a big step, and it’s natural to encounter some initial challenges. One common hurdle is the need for staff training. Getting your team up to speed on virtualization technologies may take a bit of time, but the long-term benefits make it well worth the investment. Another consideration is the initial setup cost. While virtualization can lead to significant cost savings over time, acquiring the necessary hardware and software requires an upfront investment. However, it’s important to remember that this investment pays off through reduced operational costs and improved efficiency.

Best Practices for Success

To ensure a successful transition to server virtualization, it’s important to follow some best practices. Learning from the experiences of successful implementations can provide valuable insights. For example, conducting a thorough assessment of your existing IT infrastructure will help plan the virtualization process. This includes evaluating your current hardware, software, and applications to determine compatibility with virtualization technologies. Additionally, considering factors like workload distribution and redundancy planning is crucial for a smooth transition. Implementing a phased approach and conducting thorough testing can help identify and address any potential issues before full-scale implementation.

Protecting Your Virtualized Environment

Even with the superhero-like capabilities of server virtualization, don’t forget about data protection! Virtual environments are susceptible to data loss from accidents, hardware failures, or even cyberattacks.

Storware Backup and Recovery offers a comprehensive solution specifically designed to safeguard your virtualized data centers. It provides features like:

- Easy Backups and Recovery: Streamlined processes to ensure your virtual machines are always protected.

- Flexibility: Supports various virtual environments and offers granular recovery options.

- Advanced Security Measures: Linux-based installation, RBAC, Air-gap Backup, Retention Lock and more, keeping your data safe and secure.

By implementing Storware Backup and Recovery alongside server virtualization, you’ll have a winning combination for a sustainable, secure, and efficient IT infrastructure.

Paving the Way for Greener IT

Server virtualization is not just a technological advancement; it’s a critical step toward a more sustainable future in IT. By adopting these practices, businesses can save costs, reduce their environmental impact, and enhance their overall operational efficiency. Incorporating virtualization into your IT infrastructure isn’t just a smart business move; it’s also a responsible environmental choice. The benefits extend beyond the bottom line, contributing to a healthier planet for all. Consider taking the first step towards a greener IT future. Explore the possibilities of server virtualization and discover how it can revolutionize your business operations while positively impacting the environment.

About Version 2 Digital

Version 2 Digital is one of the most dynamic IT companies in Asia. The company distributes a wide range of IT products across various areas including cyber security, cloud, data protection, end points, infrastructures, system monitoring, storage, networking, business productivity and communication products.

Through an extensive network of channels, points of sale, resellers, and partnership companies, Version 2 offers quality products and services which are highly acclaimed in the market. Its customers span a wide spectrum that includes Global 1000 enterprises, regional listed companies, different vertical industries, public utilities, Government, a vast number of successful SMEs, and consumers in various Asian cities.

About Storware

Storware is a backup software producer with over 10 years of experience in the backup world. Storware Backup and Recovery is an enterprise-grade, agent-less solution that caters to various data environments. It supports virtual machines, containers, storage providers, Microsoft 365, and applications running on-premises or in the cloud. Thanks to its small footprint, it integrates seamlessly into your existing IT infrastructure, storage, or enterprise backup providers.