Compared to other industries, healthcare has been slow to digitalize. However, as technology evolves, cloud computing has become vital in streamlining operations and enhancing data accessibility. On the flip side, it also introduces security concerns that demand attention.

This comprehensive guide delves into the importance of robust cloud security in healthcare. It provides valuable insights to safeguard sensitive patient information, maintain regulatory compliance, and fortify the industry against evolving threats. Join us as we explore all the essential information regarding cloud security in healthcare.

The growing importance of cloud security in healthcare

After the COVID-19 pandemic, the healthcare industry experienced heightened demand for improved and more modern services. Distributed care and telemedicine pushed healthcare organizations toward cloud computing, which made data security a pressing concern. The problem is that techniques that worked for on-premises data security don’t translate well to data kept externally.

Challenges facing healthcare organizations as they transition to cloud infrastructure include:

Resource and budget strains. Most healthcare providers work with limited IT budgets, so major infrastructure overhauls are long and tedious.

Continuity of operations. Data migrations to the cloud must not disrupt everyday operations, and many healthcare providers cannot afford any downtime.

Regulatory compliance. Patient data is highly confidential, so various local regulations govern how it must be secured.

Generally, healthcare organizations want to move to cloud computing to make their services more effective while avoiding unnecessary or unmanaged risks. As patient data is one of the most sensitive data types, ensuring robust security measures is a top priority.

Types of healthcare cloud security solutions

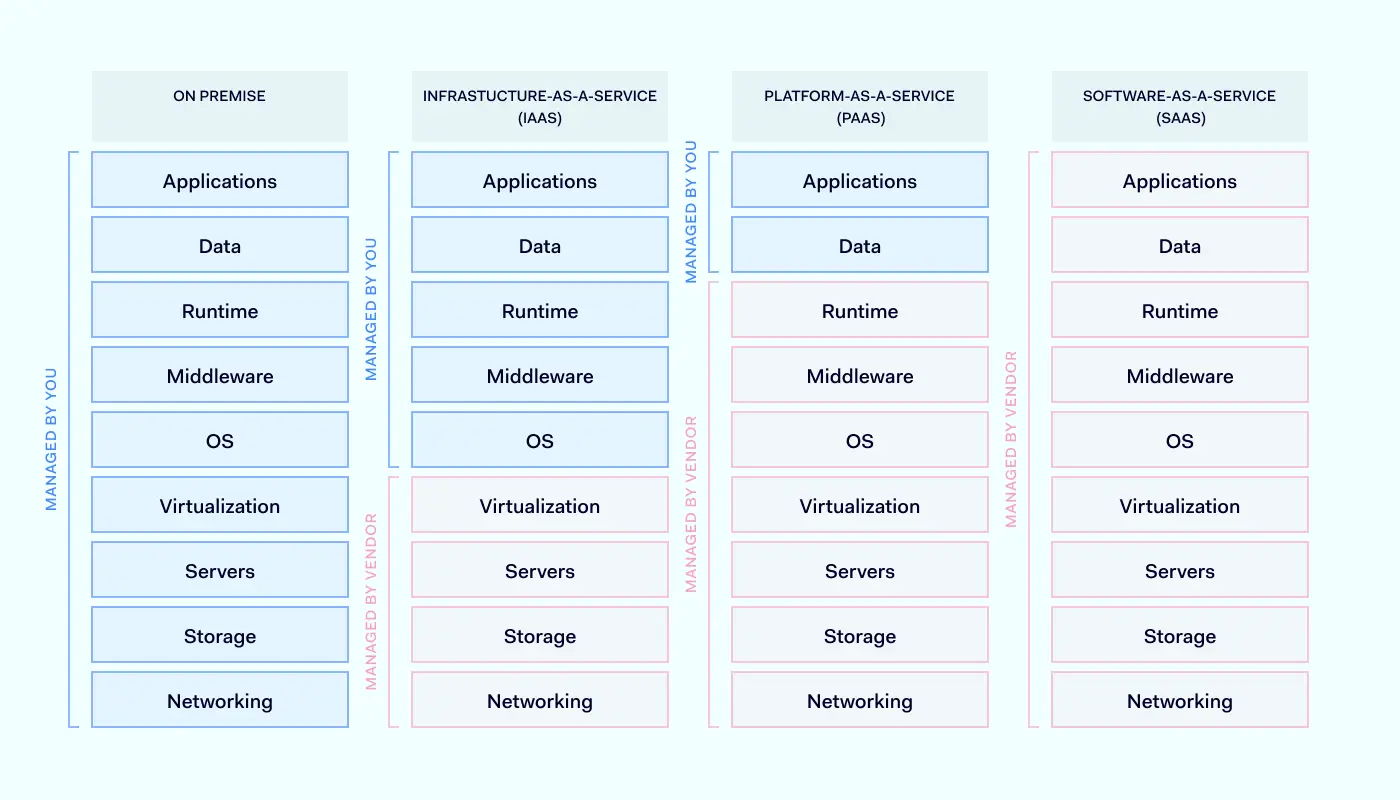

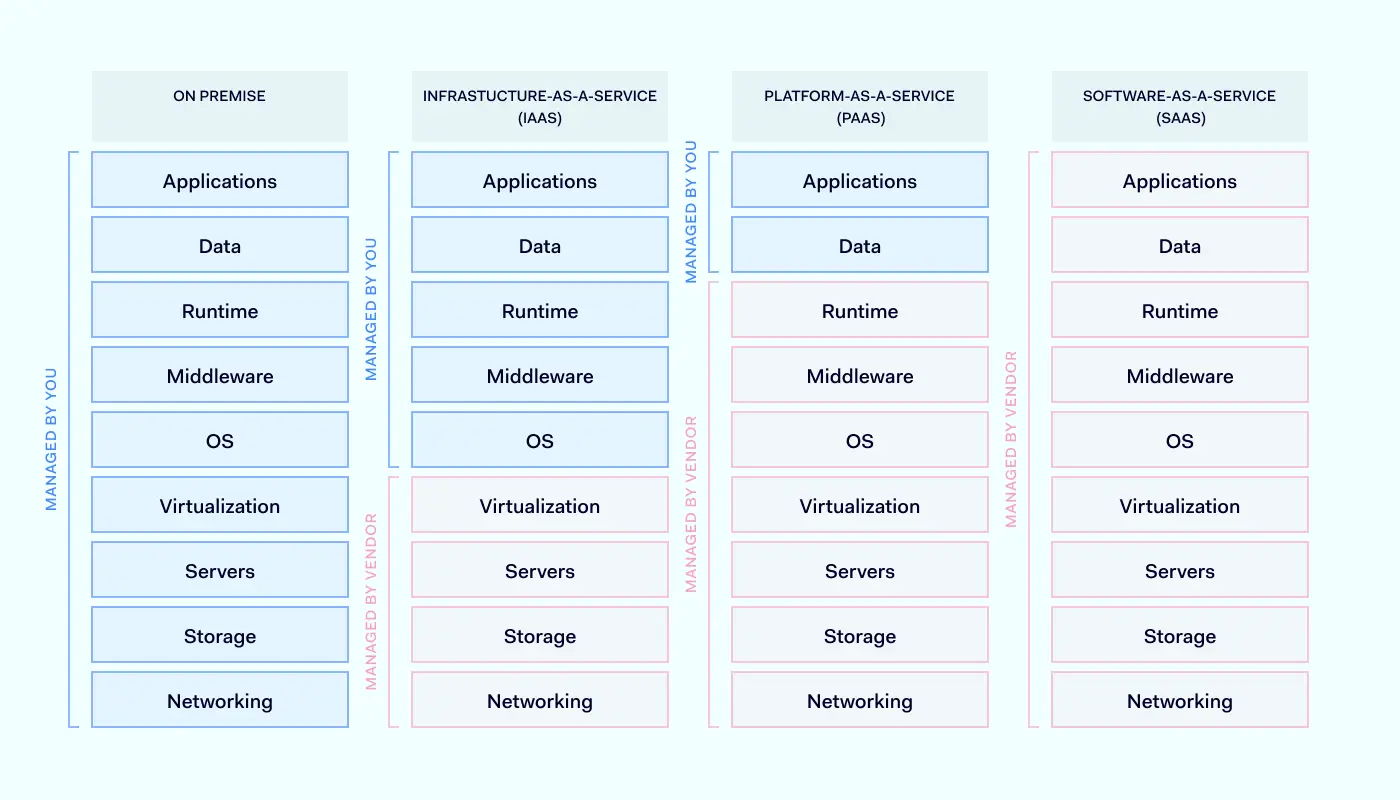

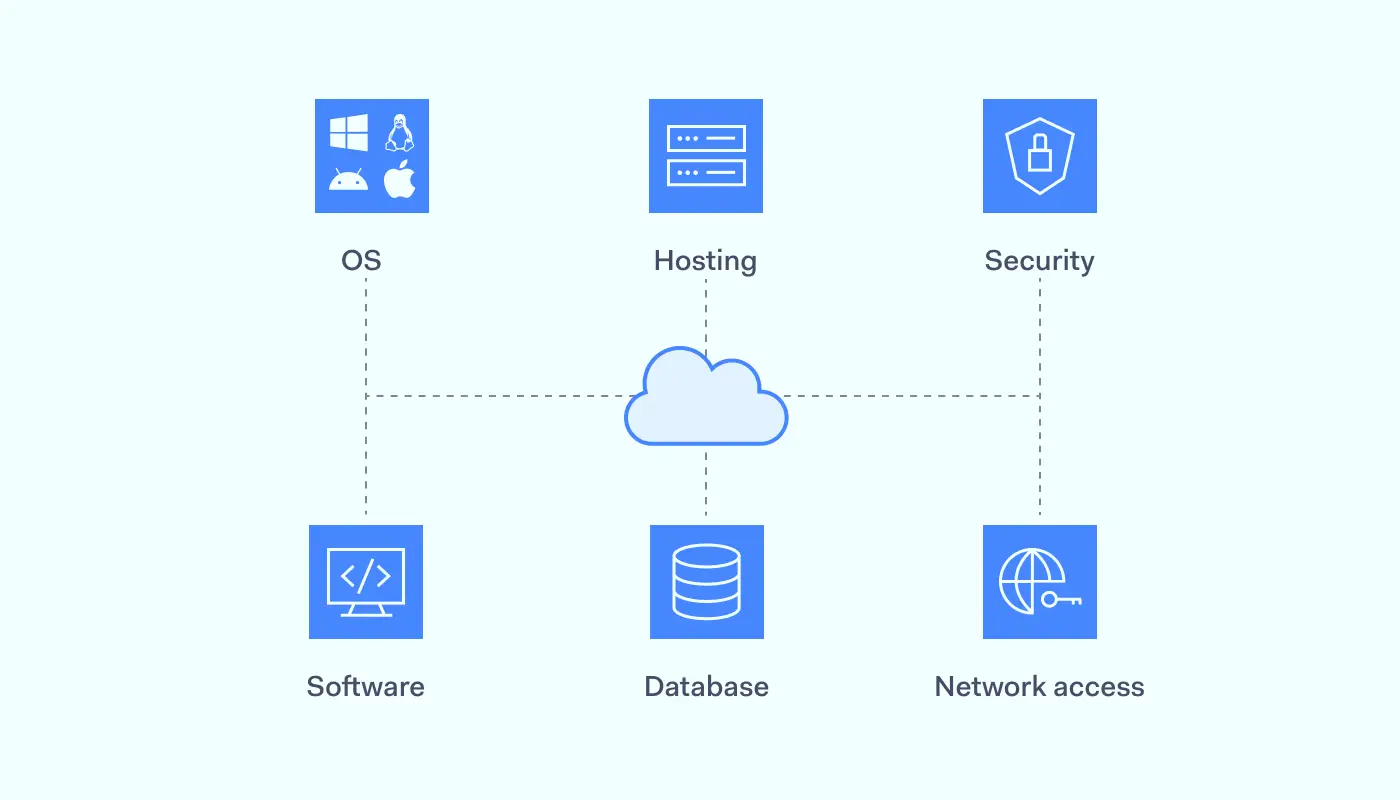

Healthcare providers (and, by extension, most industries) rely on three main types of cloud computing services. This includes Infrastructure-as-a-Service (IaaS), Platform-as-a-Service (PaaS), and Software-as-a-Service (SaaS).

Infrastructure-as-a-Service (IaaS)

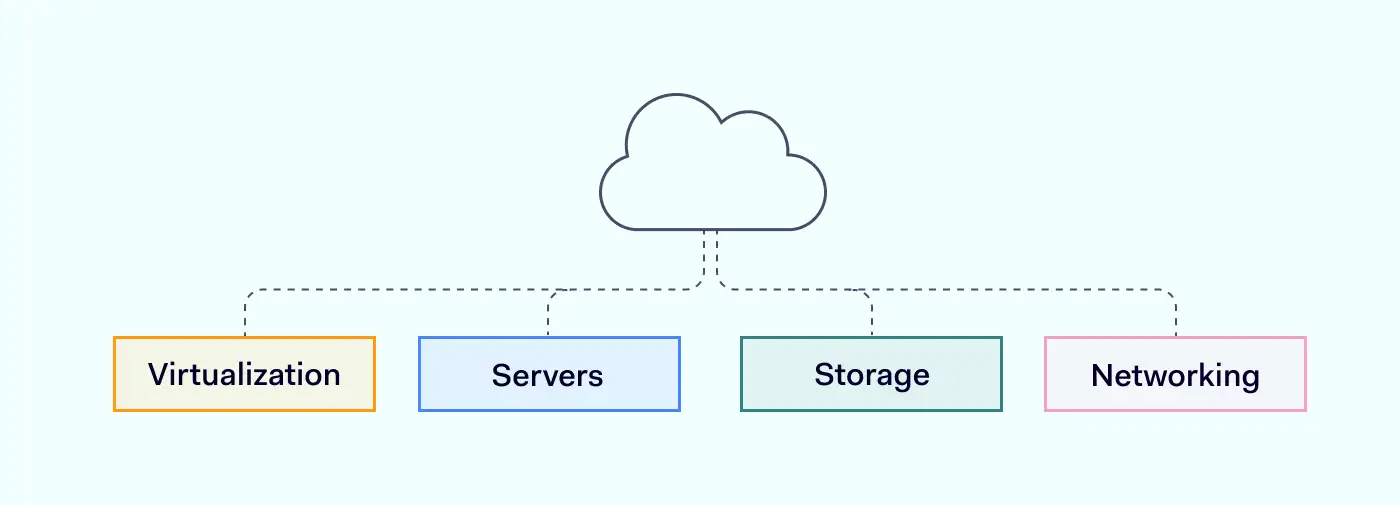

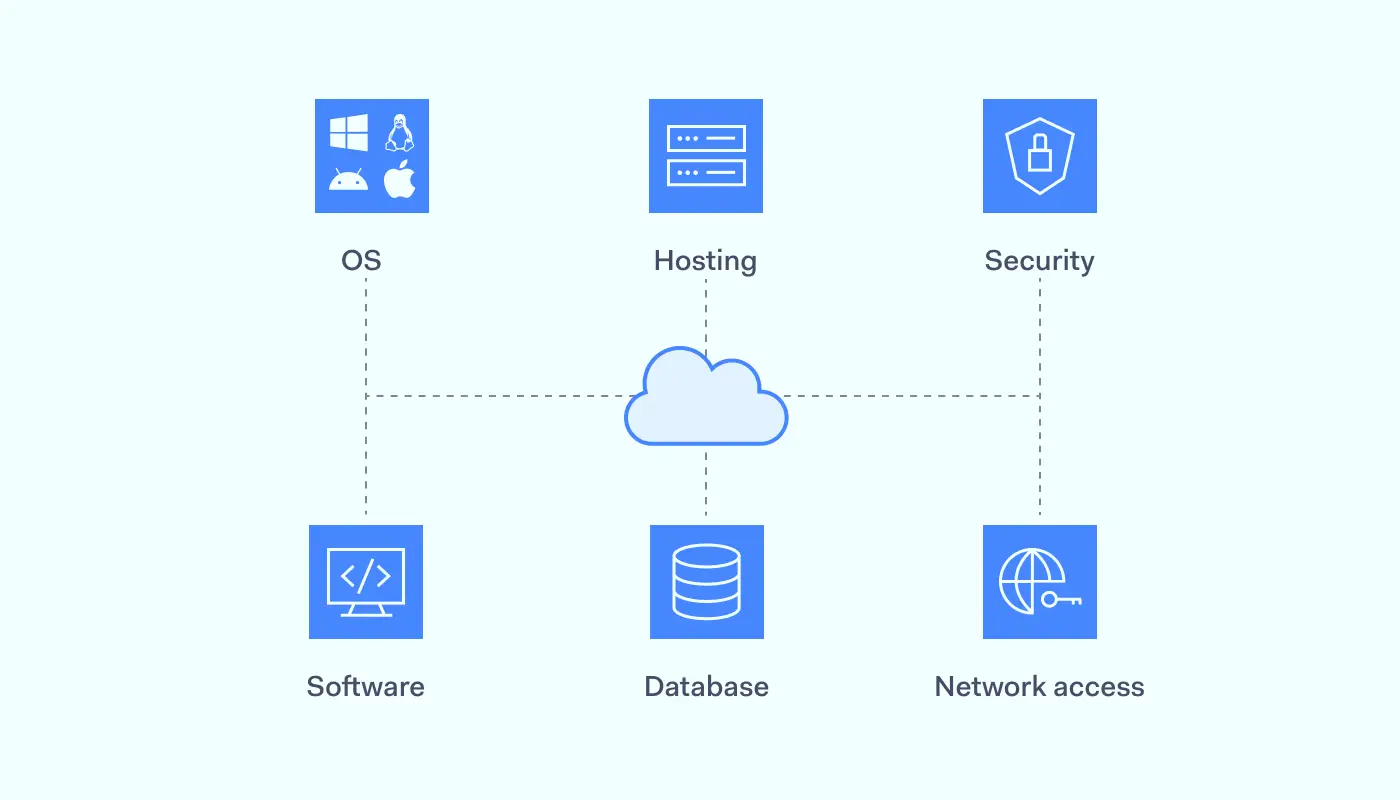

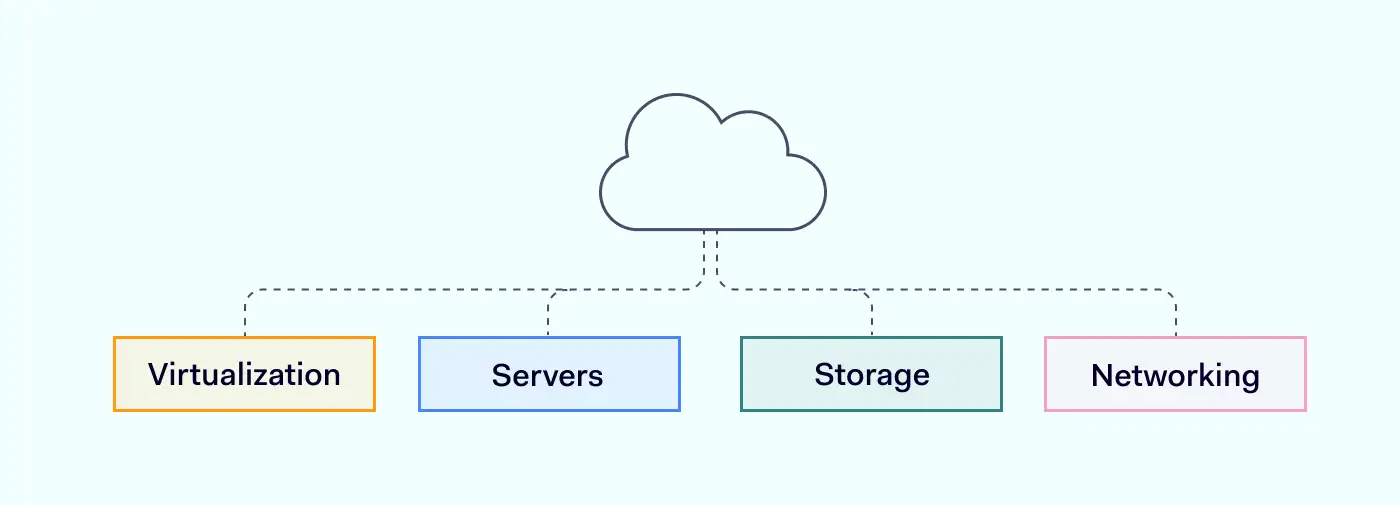

Infrastructure-as-a-Service provides virtualized computing resources as services over the internet. In IaaS, the service provider manages and delivers all associated hardware and software components: servers, storage, networking, and virtualization resources. With IaaS, users can provision and control these resources on-demand, scaling them up or down as needed.

Benefits of IaaS in healthcare

Aside from the fact that cloud computing makes it easier to deploy workloads, IaaS has a range of benefits that could be useful for healthcare companies.

Scalability and flexibility. By leveraging IaaS, users can rapidly deploy and configure virtual machines, storage, and network components. This allows healthcare organizations to scale their infrastructure up or down based on their actual needs.

Disaster recovery. IaaS enables organizations to back up and recover their critical data and virtual machines. Because critical data and applications are kept in cloud storage, they can be restored after an outage, which helps preserve their availability and integrity.

Cost efficiency. IaaS service providers use a flexible pay-as-you-go pricing model allowing users to pay only for the resources they use. This enables cost optimization, eliminating the need for upfront hardware and infrastructure maintenance investments.

Security challenges and how to address them

IaaS security is shared between the service provider and the user. While the service provider is responsible for managing underlying networking, storage, servers, and virtualization, the user is responsible for managing the security of everything running on top of the infrastructure. This involves operating systems, middleware, data, and applications. This setup is not without cybersecurity challenges.

Data protection. Sensitive patient data must be protected using encryption and access controls. As the data is physically located in third-party data centers, unauthorized access or breaches are the primary concern.

Compliance. Patient data is protected by law, so regulatory compliance applies to it. Organizations must ensure that their IaaS providers adhere to the applicable regulations and protect sensitive patient data from unauthorized access or breaches.

For these reasons, choosing an IaaS provider carefully is crucial. Implementing multi-factor authentication, regular vulnerability assessments, and proactive monitoring can enhance security.

Platform-as-a-Service (PaaS)

Platform-as-a-Service builds on IaaS by adding a dedicated environment for developing, deploying, and managing applications over the internet. It offers tools, frameworks, and services that enable developers to build, test, and run applications. PaaS providers handle hardware provisioning, operating system management, and network setup, allowing developers to focus solely on application development.

Benefits of PaaS in healthcare

With PaaS, healthcare providers get a platform for developing, testing and deploying applications in the cloud. Here are its main benefits:

Rapid application development. PaaS simplifies the application development process, allowing one to skip multiple setup steps and go directly to the deployment. This can accelerate innovation and provide new solutions quickly.

Scalability and performance. Like other cloud-based services, PaaS platforms can scale automatically based on demand, which supports high availability and consistent performance.

Collaboration and integration. PaaS platforms typically offer integrations with existing systems, so tools already in use can be brought into a unified system.

Security challenges and how to address them

When adopting PaaS, organizations need to be wary of its security challenges. Here are some examples:

Application security. PaaS environments involve the deployment and running of custom applications. Therefore, businesses should conduct regular code reviews, implement secure coding practices, and perform vulnerability assessments.

Secure configuration. Businesses need to make sure that the PaaS platforms they use are properly configured. This includes firewalls, network access controls, and encryption protocols.

Incident response and monitoring. PaaS environments require ongoing monitoring and timely incident response capabilities. Establishing robust logging and monitoring systems and employing detection and prevention mechanisms gives organizations a system that is ready in case of an intrusion.

Software-as-a-Service (SaaS)

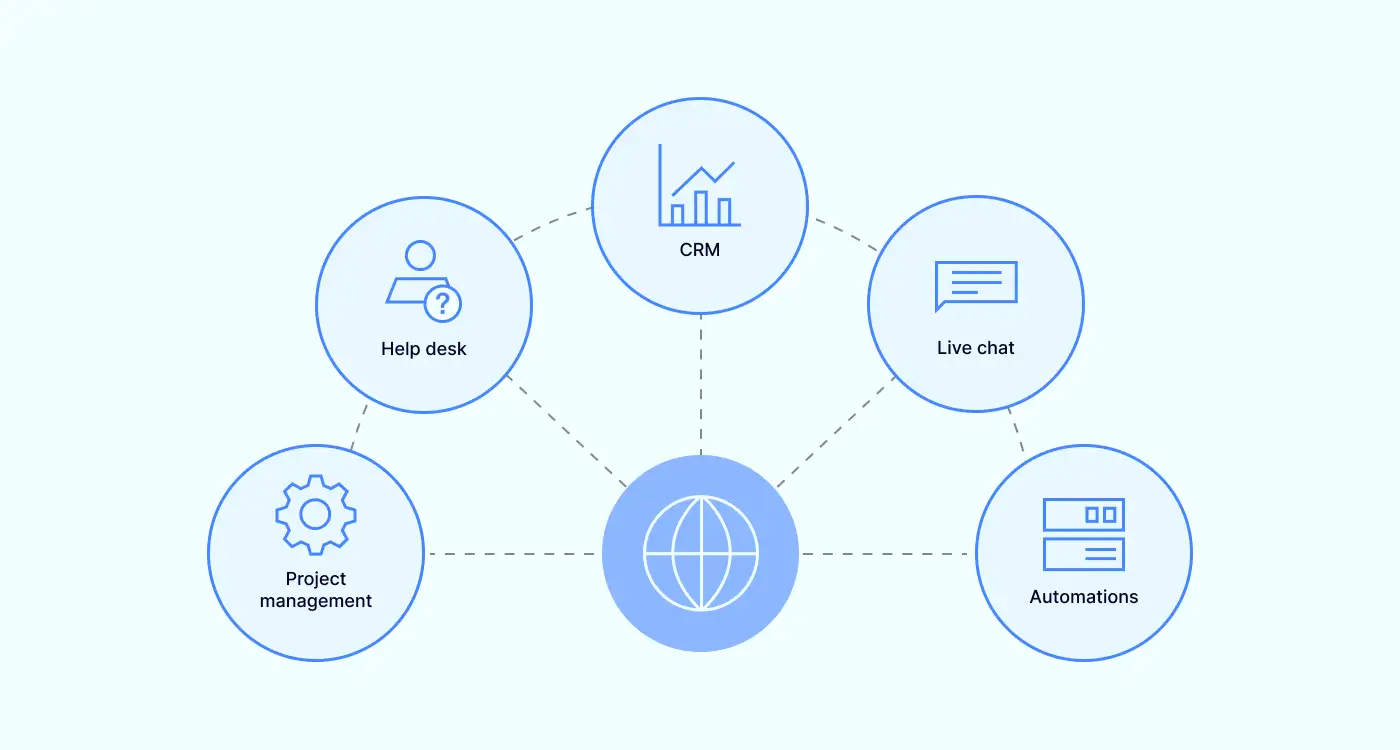

Software-as-a-Service is a cloud computing model in which hosted software is delivered over the internet instead of being installed on-premises. In this model, the software is centrally hosted by a provider who manages and maintains the underlying infrastructure, database, and updates. Users pay a subscription fee to access and use the software.

Many healthcare-related applications are delivered via SaaS, including picture archiving and communication systems (PACS), electronic health records (EHR), telehealth services, and more.

Benefits of SaaS in healthcare

With SaaS, healthcare organizations are provided with the service directly without the need to handle setup and maintenance. Here are its main benefits:

Accessibility and mobility. SaaS applications can be accessed from anywhere, enabling healthcare professionals to securely access patient information on various devices and work more efficiently.

Automatic updates. The responsibility for software updates and patches falls on the service provider, so healthcare applications stay up to date and are better protected against emerging security threats.

Fast deployment. SaaS applications are provided instantly and with minimal setup compared to on-premises software. Software updates and maintenance are handled by the SaaS provider, ensuring smooth operation.

Security challenges and how to address them

SaaS does not bring only benefits to healthcare organizations. It also comes with security challenges that IT personnel need to address.

Access control. As SaaS applications are externally hosted, managing user access and authentication is critical. Strong authentication and tightly scoped permissions are essential to preventing unauthorized intrusions.

Third-party integrations. Some SaaS applications need to be integrated with third-party services or APIs. These integrations can introduce security risks if not properly managed or if they have exploitable vulnerabilities.

Multi-tenancy risks. The same SaaS application can serve multiple consumers, sharing the same underlying structure and resources. This is why logical separation and isolation between tenants are crucial to prevent data leakage or unauthorized access to customer data.
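To make logical separation between tenants more concrete, here is a minimal sketch in Python. It assumes a hypothetical in-memory store where every record carries a `tenant_id`; the class and field names are illustrative and do not describe any particular SaaS product. The idea is that every read path is scoped to the caller's tenant, so there is no query that can return another tenant's data by accident.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    tenant_id: str   # hypothetical owner of the record, e.g. a clinic
    patient_id: str
    note: str


class TenantScopedStore:
    """In-memory store whose read methods only return the caller's own rows."""

    def __init__(self) -> None:
        self._rows = []

    def add(self, record: Record) -> None:
        self._rows.append(record)

    def list_for(self, tenant_id: str) -> list:
        # Every read is filtered by tenant; there is no unscoped query method.
        return [r for r in self._rows if r.tenant_id == tenant_id]

    def get(self, tenant_id: str, patient_id: str) -> Record:
        for r in self.list_for(tenant_id):
            if r.patient_id == patient_id:
                return r
        # Same error whether the record is missing or owned by another tenant,
        # so the response does not reveal that it exists elsewhere.
        raise KeyError(patient_id)


store = TenantScopedStore()
store.add(Record("clinic-a", "p1", "follow-up in 2 weeks"))
store.add(Record("clinic-b", "p2", "lab results pending"))
```

In this sketch, `store.get("clinic-a", "p2")` raises `KeyError` even though the record exists, because it belongs to a different tenant. Real multi-tenant systems usually enforce the same rule at the database or infrastructure level as well.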

Compliance and regulatory landscape in cloud security

Regulatory compliance is a critical consideration for organizations across industries. Many countries have implemented data protection and cybersecurity laws in recent years. Governments regulate the privacy of medical data, and breaching these laws carries serious consequences.

Here are some prominent regulations and guidelines that could impact cloud security, along with strategies for ensuring compliance.

HIPAA and HITECH

The Health Insurance Portability and Accountability Act (HIPAA) and the Health Information Technology for Economic and Clinical Health (HITECH) Act are crucial regulations in the US healthcare industry. They serve different functions:

HIPAA: sets standards for protecting sensitive patient health information

HITECH: promotes the adoption of electronic health records and strengthens HIPAA enforcement

Compliance with both is essential when leveraging cloud computing services in the healthcare sector. Organizations need to take care of security measures like data encryption, access controls, and regular audits to safeguard patient data and meet the requirements outlined in these regulations.

GDPR

The General Data Protection Regulation (GDPR) is a comprehensive data protection framework that affects organizations operating in European Union countries or handling the personal data of individuals in the EU. It emphasizes individual privacy rights, consent management, and data breach notification.

Cloud service providers and organizations utilizing cloud computing services must comply with GDPR by implementing appropriate security measures, conducting data protection impact assessments, and ensuring cross-border data transfers adhere to GDPR guidelines. Encryption, pseudonymization, and privacy-by-design principles are critical for achieving compliance with GDPR in cloud computing.
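As an illustration of pseudonymization, the sketch below replaces a patient identifier with a keyed hash (HMAC-SHA256) using only the Python standard library. This is one possible technique, not a method prescribed by GDPR; the identifier format and the key are hypothetical, and whether a given scheme is sufficient depends on the organization's own assessment.

```python
import hashlib
import hmac


def pseudonymize(patient_id: str, secret_key: bytes) -> str:
    """Return a stable pseudonym for patient_id using HMAC-SHA256."""
    digest = hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


# Hypothetical key for illustration only; a real key would come from a
# secrets manager and be stored separately from the pseudonymized data.
DEMO_KEY = b"demo-key-not-for-production"

token_a = pseudonymize("MRN-000123", DEMO_KEY)
token_b = pseudonymize("MRN-000124", DEMO_KEY)
```

The same identifier always maps to the same token, so records can still be linked for analysis, while anyone without the key cannot recompute tokens from known identifiers.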

Other regional regulations and guidelines

In addition to HIPAA, HITECH, and GDPR, other regional regulations and guidelines impact cloud security in specific industries or geographic locations. Examples include the Payment Card Industry Data Security Standard (PCI DSS) for the payment card industry and the Federal Risk and Authorization Management Program (FedRAMP) for U.S. government agencies.

Compliance with these regulations requires organizations to align their cloud security practices with specific requirements. Depending on the regulation and area, this may include data encryption, access controls, vulnerability management, and incident response protocols. Staying informed about relevant regional regulations is crucial to ensure compliance and avoid potential penalties or reputational damage.

Navigating third-party challenges and shared responsibility

As mentioned previously, adopting cloud services involves collaboration with third parties. Here are some key considerations regarding the division of security responsibilities between the cloud service provider and the customer:

Vendor risk assessment

A thorough vendor risk assessment helps to make sure that a cloud provider is a good fit for a healthcare organization’s needs. The cloud service provider market is saturated, but not every provider has compliant security controls, certifications, incident response capabilities, and data protection practices. The same strict requirements that apply to healthcare organizations also apply to their third-party partners.

By assessing vendor risks, organizations can make informed decisions and select providers that align with their security requirements and compliance obligations. A provider’s failure to secure the underlying infrastructure can open a gap in the security set up by the healthcare provider.

Understanding the shared responsibility model

The shared responsibility model defines the division of security responsibilities between cloud service providers and customers. While providers are responsible for securing the underlying infrastructure, customers are accountable for securing their data and applications within the cloud.

Organizations must understand and fulfill their share of responsibilities, which may involve tasks such as configuring access controls, encrypting sensitive data, applying patches and updates, and regularly monitoring for security incidents.
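The division of responsibilities can be sketched as a simple lookup table. The IaaS split below follows the description earlier in this article; placing middleware on the provider side for PaaS and treating user access as always the customer's job are assumptions based on the commonly described model. The actual split depends on the provider and the contract.

```python
# Stack layers ordered from the bottom (infrastructure) to the top.
LAYERS = [
    "networking", "storage", "servers", "virtualization",
    "operating system", "middleware", "applications", "data", "user access",
]

# How many layers, counted from the start of LAYERS, the provider secures.
# These depths are illustrative assumptions, not a provider's official matrix.
PROVIDER_DEPTH = {"IaaS": 4, "PaaS": 6, "SaaS": 7}


def customer_responsibilities(model: str) -> list:
    """Return the layers the customer must secure for a given service model."""
    return LAYERS[PROVIDER_DEPTH[model]:]


def provider_responsibilities(model: str) -> list:
    """Return the layers the provider secures for a given service model."""
    return LAYERS[:PROVIDER_DEPTH[model]]
```

For example, `customer_responsibilities("SaaS")` returns only `data` and `user access`, while the IaaS result also includes the operating system, middleware, and applications.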

Key cloud security strategies and solutions for healthcare

While cloud computing is an appealing way to make operations more modern and effective, the downside is the potential cybersecurity risk. Safeguarding sensitive patient data and navigating regulatory compliance requirements are the primary concerns for healthcare providers. There are three main cloud security strategies and solutions to consider.

Advanced threat prevention

Advanced threat prevention is one of the key cloud security strategies for healthcare. It involves deploying sophisticated security measures to identify and mitigate potential threats before they cause any damage. Relying on technologies like machine learning algorithms, behavior analysis, deep packet inspection, and real-time monitoring, organizations aim to detect and respond to suspicious activities.

As a proactive approach to cybersecurity, advanced threat prevention allows healthcare organizations to identify and neutralize threats early. This helps reduce the risk of data breaches and unauthorized access to patient information.

Cloud-based security operations and monitoring

Monitoring is critical in ensuring the integrity and confidentiality of healthcare data stored in the cloud. By providing continuous oversight and proactive detection of potential security breaches or unauthorized access attempts, monitoring enables organizations to respond to security incidents promptly.

In addition, by leveraging cloud-based security tools, healthcare organizations can centralize security operations, streamline incident response, and gain insights into potential vulnerabilities. These systems can be automated, helping organizations detect and mitigate security breaches in time and enhancing overall security posture with less manual effort.

Secure remote work

During the COVID-19 pandemic, the adoption of remote work in the healthcare sector accelerated. Secure remote access became critical as healthcare professionals needed to access patient data and collaborate remotely.

Cloud security solutions enable secure storage of sensitive data, so healthcare providers can work efficiently while adhering to strict security protocols. Implementing secure virtual private networks (VPNs), multi-factor authentication, and encryption technologies safeguards data transmission and prevents unauthorized access, mitigating risks associated with remote work.
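One common second factor in multi-factor authentication is a time-based one-time password (TOTP), the kind generated by authenticator apps. The sketch below implements the RFC 6238 algorithm with HMAC-SHA1 using only the Python standard library. It illustrates the mechanism and is not a description of how any specific product implements MFA; the function names are chosen for this example.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    if at_time is None:
        at_time = time.time()
    counter = int(at_time) // step                      # 30-second time window
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F                             # dynamic truncation (RFC 4226)
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify(secret: bytes, submitted: str, at_time: Optional[float] = None) -> bool:
    """Compare the submitted code with the expected one in constant time."""
    return hmac.compare_digest(totp(secret, at_time), submitted)
```

With the RFC 6238 test secret `b"12345678901234567890"` and `at_time=59`, the 8-digit code is `94287082`. A production setup would also limit retry attempts and usually accept codes from an adjacent time window to tolerate clock drift.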

Cloud security in action: enabling new healthcare capabilities

Cloud security does more than safeguard patient data. It also empowers healthcare organizations to embrace new capabilities and innovate. Here are some ways in which cloud security can facilitate advancements.

Redundancies to prevent ransomware attacks

Ransomware attacks use malware that encrypts data stored on a device’s drive, rendering it inaccessible until a payment is made to the attacker. This is extremely disruptive to organizations relying on on-premises infrastructure, as it can completely shut down operations and compromise patient data.

One of the most effective defenses is replicating data across multiple dispersed locations. That way, there’s no single centralized store that could be tampered with. After an incident, data can be restored from unaffected backups, minimizing downtime and ensuring continuity of care. Cloud servers enable effective mirroring solutions that allow distributed backups.
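Restoring from a replica only helps if that replica is still intact. As a rough sketch, the Python snippet below compares each replica against a checksum recorded when the backup was taken and returns the locations that still match. The location names and data are made up for illustration; a real setup would read from actual storage and keep the recorded digest somewhere attackers cannot modify.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def find_intact_replicas(replicas: dict, expected_digest: str) -> list:
    """Return the locations whose content still matches the recorded digest."""
    return [
        location
        for location, blob in replicas.items()
        if sha256_hex(blob) == expected_digest
    ]


# Digest recorded at backup time (hypothetical archive content).
original = b"patient-archive-2024-01"
recorded_digest = sha256_hex(original)

# Hypothetical replicas; the on-premises copy has been altered.
replicas = {
    "region-a": original,
    "region-b": original,
    "on-prem": b"\x00encrypted-by-ransomware\x00",
}

intact = find_intact_replicas(replicas, recorded_digest)
```

Here `intact` contains the two cloud regions but not the altered on-premises copy, so a restore job would know which sources are safe to use.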

Delegation of security responsibilities to third-party firms

Moving to the cloud can make it easier to outsource security operations, allowing better distribution of work in your organization. Managing and maintaining robust cloud security infrastructure requires specialized expertise. That’s one of the key reasons why many healthcare organizations delegate their security responsibilities to reputable third-party vendors.

Cloud computing partners already possess the knowledge and resources to implement industry best practices, conduct regular security assessments, and respond to emerging threats promptly. This allows organizations to enhance the cloud security posture and focus on quality patient care.

Automation to free up healthcare resources

Cloud security can also be improved through automation. By automating vulnerability scanning, log analysis, and security policy enforcement, healthcare providers can free up their workforce from manual and time-consuming tasks.

Automation improves efficiency, reduces the risk of human error, and ensures consistent application of security controls. Because IT professionals aren’t burdened with recurring manual tasks, they have more time to focus on advanced security measures and stay updated with evolving threats.
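As a small example of automated log analysis, the sketch below counts failed logins per user and flags accounts that reach a threshold. The log format (`<timestamp> <user> <event>`) and the event name `LOGIN_FAILED` are hypothetical and chosen only for this illustration; real logs would need their own parser.

```python
from collections import Counter


def flag_repeated_failures(log_lines, threshold=3):
    """Return a sorted list of users with at least `threshold` failed logins."""
    failures = Counter()
    for line in log_lines:
        # Hypothetical log format: "<timestamp> <user> <event>"
        parts = line.split()
        if len(parts) == 3 and parts[2] == "LOGIN_FAILED":
            failures[parts[1]] += 1
    return sorted(user for user, count in failures.items() if count >= threshold)


sample_log = [
    "2024-01-05T08:00:01Z nurse01 LOGIN_OK",
    "2024-01-05T08:00:07Z admin LOGIN_FAILED",
    "2024-01-05T08:00:09Z admin LOGIN_FAILED",
    "2024-01-05T08:00:12Z admin LOGIN_FAILED",
    "2024-01-05T08:01:30Z dr_lee LOGIN_FAILED",
    "malformed line without enough fields to parse here",
]

flagged = flag_repeated_failures(sample_log)
```

With the sample data, only `admin` is flagged. A scheduled job could run a check like this and raise an alert, leaving staff to review only the accounts that stand out.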

Expert insights and resources for healthcare cloud security

Several organizations provide expert insights and resources for healthcare cloud security. Cloud Security Alliance (CSA), the European Union Agency for Cybersecurity (ENISA), and the National Institute of Standards and Technology (NIST) are the main ones providing various recommendations for cloud security in healthcare companies.

CSA

CSA has established requirements for healthcare organizations to ensure secure cloud computing practices. These requirements mainly focus on several key areas:

Implement strong access controls and authentication mechanisms to protect sensitive data.

Regularly monitor and audit cloud services for security vulnerabilities and incidents.

Encrypt data both in transit and at rest to maintain confidentiality.

Conduct regular risk assessments and threat modelling to identify and mitigate potential risks.

Establish incident response and recovery plans to handle security breaches effectively.

Stay updated with the latest security best practices and standards.

By adhering to these CSA requirements, healthcare organizations can enhance the security of their cloud computing environments and protect patient information from unauthorized access or data breaches.

ENISA

ENISA lays out comprehensive requirements for healthcare organizations in the European Union to enhance their cybersecurity measures. These requirements encompass multiple aspects of cloud security:

Develop and enforce robust security policies and procedures for cloud adoption.

Perform thorough risk assessments to identify and address potential security threats.

Ensure the secure configuration and hardening of cloud computing environments.

Employ strong access controls and authentication mechanisms to protect sensitive data.

Regularly monitor and log cloud computing activities to detect any suspicious behaviour.

Establish incident response plans and conduct regular security audits.

Adherence to these ENISA requirements is vital to safeguarding patient data, protecting critical healthcare systems, and maintaining the resilience and trustworthiness of healthcare services within the EU.

NIST

NIST provides guidelines and requirements for healthcare organizations to ensure the security and privacy of patient information. These requirements include:

Follow the NIST Cybersecurity Framework for risk management and cybersecurity best practices.

Employ strong identity and access management controls to protect data and resources.

Use encryption to safeguard data both in transit and at rest.

Regularly update and patch cloud infrastructure and applications to address security vulnerabilities.

Implement robust network security controls, such as firewalls and intrusion detection/prevention systems.

Conduct continuous monitoring and log analysis to promptly detect and respond to security incidents.

Healthcare companies must review and adapt these recommendations to their organizational needs and regulatory requirements.

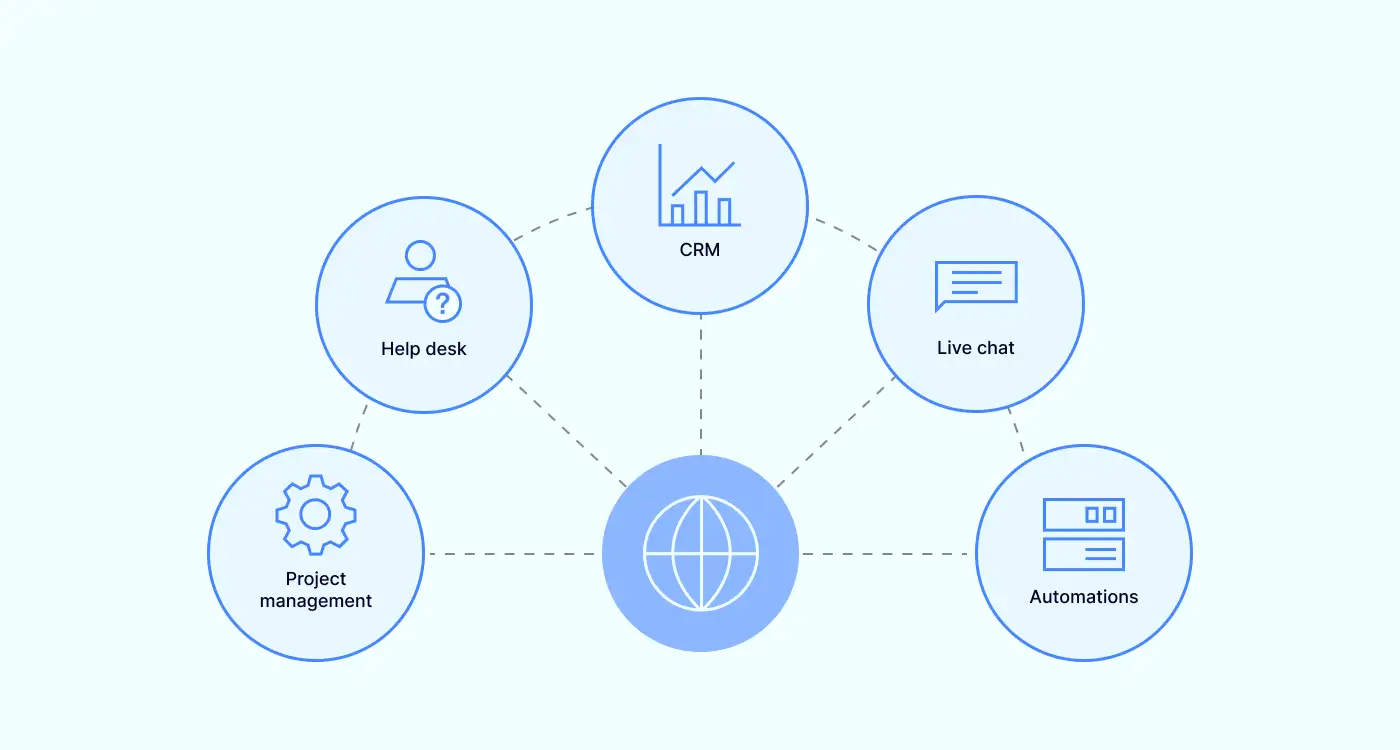

How can NordLayer help?

Securing cloud infrastructure can be challenging for healthcare companies. Still, the benefits outweigh the risks, so it’s worth considering a digital transformation to improve an organization’s services. Turning to third-party partners can help make the leap.

NordLayer streamlines network access controls to ensure only authorized users can access confidential data. Access to cloud resources runs through encrypted tunnels using AES 256-bit and ChaCha20 ciphers. The service is also compatible with major cloud platforms like Azure and AWS, allowing seamless integration with other solutions and services.

With the right control mechanisms, NordLayer is a valuable ally in following cloud environment security best practices. Its extensive set of centrally implemented features and monitoring controls is managed via the Control Panel, which allows organizations to implement security policies that reduce various risks.

Contact NordLayer and discuss your security options today to ensure safe access to patient data and protect your cloud infrastructure.

FAQ

How can healthcare organizations ensure compliance in the cloud?

Healthcare organizations can work toward compliance in the cloud by first understanding the applicable regulations. Becoming familiar with regulations like HIPAA and GDPR allows organizations to identify specific compliance requirements. These requirements then serve as a basis for choosing a cloud provider and guide which access controls and other cybersecurity functionalities must be implemented.

What are examples of cloud security?

Cloud security is an umbrella term encompassing various technologies to protect data and systems in the cloud. This includes encryption, access controls, firewalls, intrusion detection and prevention systems, security information and event management, and data loss prevention.

How does the shared responsibility model work in healthcare cloud security?

The shared responsibility model defines the division of security responsibilities between the cloud service provider (CSP) and the healthcare organization. While the specifics entirely depend on the cloud service model, the cloud service provider usually takes care of the underlying cloud infrastructure. At the same time, the healthcare organization is responsible for application data security and access control.

What steps can healthcare organizations take to mitigate third-party risks?

To mitigate third-party risks, healthcare organizations must establish clear contractual agreements outlining security expectations, data handling procedures, breach notification requirements, and liability provisions. After that, they should keep monitoring their vendors through regular risk assessments. This should help organizations minimize risks associated with third parties.
