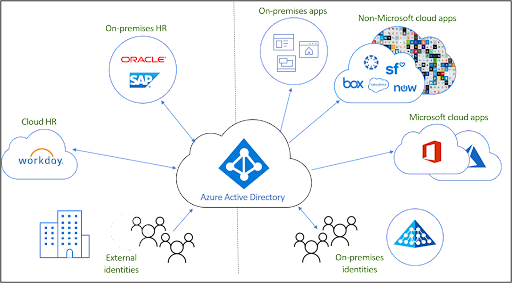

Identity is the new perimeter. Cyberattacks are becoming more advanced and cloud-focused. Identity providers (IdPs) have responded by offering security controls that make it possible for small and medium-sized enterprises (SMEs) to be proactive and mitigate these threats. Many SMEs use Microsoft’s Azure Active Directory (AAD), which has prescribed best practices to secure identities. Microsoft reserves several features for its most premium subscription levels. IT administrators must determine which subscription tiers, or which mixture of supplemental services from an open directory, are most appropriate for their unique security requirements.

This article outlines the fundamentals of securing identities in AAD with emphasis on understanding what options are available and tailoring security controls to your organization. Provisioning and identity and access management (IAM) is the starting point, followed by centralizing the identity management lifecycle, adding appropriate controls, and auditing.

Identity and Access Control

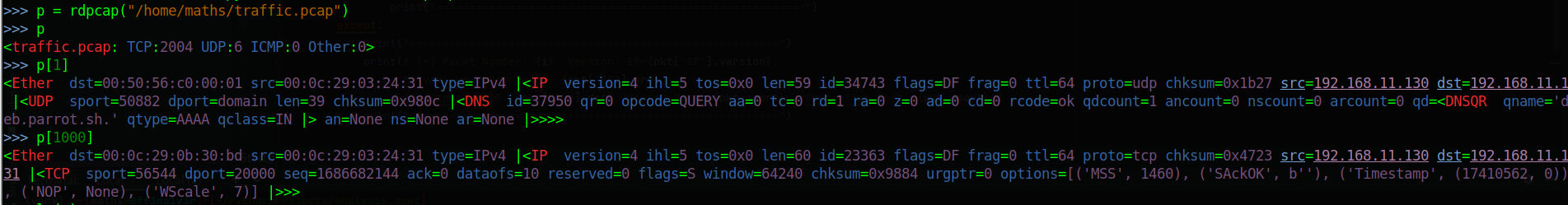

There are three main paths for provisioning in AAD:

- HR-driven onboarding.

- Federating identity from AAD to cloud apps.

- Inter-directory provisioning, such as between AAD and the Active Directory Domain Services (AD DS) server role, to access resources in your on-prem Active Directory domains.

Provision, Manage, and Deprovision Access

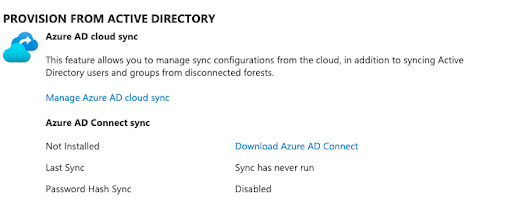

Most Microsoft shops have Active Directory (AD). A sync tool called Azure AD Connect syncs users with AAD. Microsoft also accepts non-Microsoft identities for access control, but additional costs may be assessed. Some organizations may have deployed Active Directory Federation Services (AD FS) prior to the advent of AAD.

There’s a significant potential for disruptions to system availability when identities are migrated from AD FS to AAD without deliberate planning. Avoid impulsive decision-making when you’re migrating users. Organizations that opt for a hybrid approach should harden Active Directory. This detailed guide offers recommendations about how AD should be managed and maintained for optimal security. Always limit administrative privileges in AD and avoid running day-to-day as a domain administrator.

Familiarize yourself with “join, move, and leave” planning processes and Microsoft’s concepts for identity governance. Automation is possible, but it’s designed for mid-size to large organizations. There’s no default auditing to avoid over-provisioning users or for when individuals leave. Due diligence is necessary to avoid security and compliance issues.
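As a rough illustration of the due diligence involved in the “leave” stage, the following Python sketch compares an HR roster with directory accounts and lists accounts that are still enabled after their owner has left. The `Account` shape, the `find_orphaned_accounts` helper, and the sample names are hypothetical; a real check would read from your HR system and directory exports.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    """Hypothetical, simplified view of a directory account."""
    username: str
    enabled: bool


def find_orphaned_accounts(hr_active: set[str], accounts: list[Account]) -> list[str]:
    """Return enabled accounts whose owner is not on the active HR roster."""
    return sorted(
        a.username for a in accounts if a.enabled and a.username not in hr_active
    )


if __name__ == "__main__":
    roster = {"ana"}
    directory = [Account("ana", True), Account("bob", True), Account("cy", False)]
    # "bob" has left according to HR but can still sign in.
    print(find_orphaned_accounts(roster, directory))
```

Running a comparison like this on a schedule is one way to catch leavers that a manual process missed.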

Critically Important AAD Best Practices

Verify that you’ve completed these steps before moving on.

Role-Based Access Control

AAD has built-in and custom user roles, and role-based access control (RBAC) is standard across all subscription tiers. This permits IT to follow the concept of least privilege and helps to establish a Zero Trust security approach, but it relies heavily on manual input and maintenance.

Ensure that you:

- Minimize the number of privileged accounts.

- Plan to manage, control, and monitor access.

- Limit global administrator accounts and make use of other roles such as billing administrator, global reader, helpdesk administrator, and license administrator.

- Never sync high-privilege accounts from AD.

- Pay careful attention to external collaboration settings. Consider restricting external users from inviting guests to shared files, restricting third-party storage, and reviewing and adjusting global sharing settings for SharePoint Online and OneDrive. These changes impact end users, but make it easier to recognize the “official” channels.
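The checklist above can be partly automated. The Python sketch below flags two of the conditions it describes: too many global administrators, and global administrators that were synced from AD. The `DirectoryUser` shape and the threshold of four are assumptions for illustration, not values taken from AAD.

```python
from __future__ import annotations

from dataclasses import dataclass, field

GLOBAL_ADMIN = "Global Administrator"
MAX_GLOBAL_ADMINS = 4  # assumed threshold; choose one that fits your policy


@dataclass
class DirectoryUser:
    """Hypothetical record built from a role assignment export."""
    name: str
    roles: set[str] = field(default_factory=set)
    synced_from_ad: bool = False


def audit_privileged(users: list[DirectoryUser],
                     max_global_admins: int = MAX_GLOBAL_ADMINS) -> list[str]:
    """Return human-readable findings for privileged role hygiene."""
    findings: list[str] = []
    admins = [u for u in users if GLOBAL_ADMIN in u.roles]
    if len(admins) > max_global_admins:
        findings.append(
            f"{len(admins)} global administrators exceed the limit of {max_global_admins}"
        )
    for user in admins:
        if user.synced_from_ad:
            findings.append(f"{user.name} is a global administrator synced from AD")
    return findings
```

An empty list means neither condition was found; it doesn’t replace a manual review of the other roles.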

Using security groups for users assists with application security and lowers administrative overhead. Microsoft limits this capability to AAD Premium 1 (P1) and Premium 2 (P2) accounts. Regardless of tier, avoid assigning resources directly to users, and use identity protection. Please note that Microsoft has documented multiple limitations to syncing AD groups with AAD groups. For example, AD primary group memberships will not sync over to AAD.

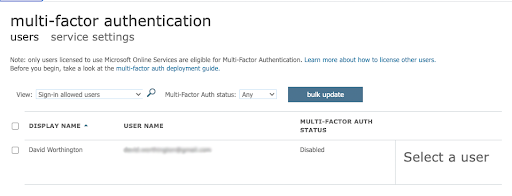

Multi-Factor Authentication

Multi-factor authentication (MFA) is vital for identity protection. AAD’s free tier only permits the use of the Microsoft Authenticator application. Admins have the option of only protecting the Azure AD Global Administrator versus all accounts, but it’s highly advisable to set up MFA for all users. Protect against MFA self-enrollment attacks by using a Temporary Access Pass (TAP) to secure the initial registration. Avoid mixing per-user MFA with Security Defaults and other settings.

Your budget may impact what’s possible. Microsoft assesses fees for all MFA verifications that happen with non-Microsoft identities, and capabilities vary depending upon licensing levels.

Consider enabling additional context and “number matching” in Authenticator notifications. Additional context adds the application name and geographic location to Push MFA prompts. This practice safeguards against “MFA bombing,” where attackers send repeated requests to exploit MFA fatigue. Attackers have also hijacked Microsoft users’ sign-in sessions to bypass MFA at 10,000 organizations by using advanced phishing toolkits. Microsoft’s mitigation is to use certificate-based authentication and Fast IDentity Online 2 (FIDO2) MFA implementations.

MFA through FIDO2 devices and Windows Hello requires AAD P1 or P2. Additional hardware costs may apply. Some additional security controls include conditional access (CA).
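To make the idea of number matching concrete, here is a generic Python sketch of the flow: the sign-in screen shows a short random number, and the push prompt is approved only if the user types that same number into the authenticator. This is an illustration of the concept under simple assumptions, not Microsoft’s implementation; `new_challenge` and `approve` are hypothetical names.

```python
import hmac
import secrets


def new_challenge() -> str:
    """Create a two-digit number to display on the sign-in screen."""
    return f"{secrets.randbelow(100):02d}"


def approve(challenge: str, entered: str) -> bool:
    """Approve the push only if the user typed the displayed number.

    A blind tap on "Approve" is no longer enough, which is what blunts
    MFA fatigue attacks.
    """
    return hmac.compare_digest(challenge.encode(), entered.strip().encode())
```

Because the attacker’s sign-in screen, not the victim’s, displays the number, a user who receives an unexpected prompt has nothing valid to enter.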

Conditional Access

Microsoft recommends that all accounts deploy CA, but it’s also an extra cost and only available through P1, P2, or the E3 and E5 tiers for Microsoft 365 (M365) users. The standard M365 tier doesn’t include it. The overall licensing scheme is changing and can be bewildering.

There’s more than one CA implementation:

- P1 enforces MFA in certain scenarios

- P2 is risk based, learning user behavior to minimize MFA prompts
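The difference between the two approaches can be sketched in a few lines of Python. The first function applies fixed rules, and the second prompts only when a risk score crosses a threshold. The `SignIn` fields, group names, trusted countries, and threshold are all assumptions made for this example; real policies are configured in the directory rather than in code.

```python
from __future__ import annotations

from dataclasses import dataclass

PRIVILEGED_GROUPS = {"admins"}    # assumed group name
TRUSTED_COUNTRIES = {"US", "CA"}  # assumed trusted locations
RISK_THRESHOLD = 0.7              # assumed cut-off on a 0.0 to 1.0 scale


@dataclass(frozen=True)
class SignIn:
    """Hypothetical sign-in context."""
    user: str
    groups: frozenset[str]
    country: str
    risk_score: float


def rule_based_decision(sign_in: SignIn) -> str:
    """Scenario-style policy: MFA for privileged users or untrusted locations."""
    if sign_in.groups & PRIVILEGED_GROUPS or sign_in.country not in TRUSTED_COUNTRIES:
        return "require_mfa"
    return "allow"


def risk_based_decision(sign_in: SignIn, threshold: float = RISK_THRESHOLD) -> str:
    """Risk-style policy: prompt only when the sign-in looks unusual."""
    return "require_mfa" if sign_in.risk_score >= threshold else "allow"
```

The risk-based variant trades fewer prompts for a dependency on how well the risk score reflects real behavior.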

There are additional steps to consider for password management before we move on.

Configure Password Management

Microsoft has revised its password policy guidance to no longer expire passwords. It’s important to understand that SMEs that are regulated or don’t have MFA and CA configured shouldn’t do that. You may also consider changing passwords if you suspect an ID has been hijacked. CrowdStrike found that 71% of attacks are now malware-less and targeting cloud IDs. 75% of cloud breaches are due to compromised identities. A Zero Trust posture isn’t optional. Consider deploying Extended Detection and Response (XDR) from a vendor of your choosing or paying extra for Microsoft Identity Protection if you prefer the Microsoft stack.

Other best practices are:

- Set up self-service password reset (SSPR) with two authentication methods. Note that using security questions might be risky, because attackers gather intelligence on employees that’s “open source” from the web or obtain information from third-party breaches elsewhere. Microsoft charges extra for on-premises write-back.

- Use the same password policies everywhere (on-prem and cloud-based). Microsoft maintains extensive documentation on an agent-based approach to enforce AAD password protection on AD DS without exposing your domain controller to the web or forcing networking changes. Note that you have to be proficient in modifying AD settings.

Prepare for the Worst

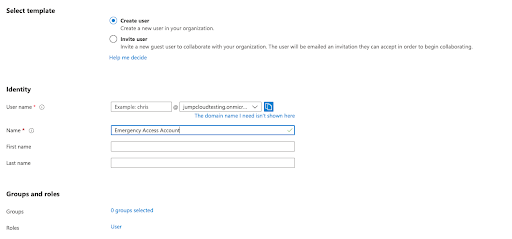

Create an emergency access Global Admin account for when it’s necessary to “break the glass” during network outages and periods of system downtime. This account is excluded from CA and MFA. Always store these credentials appropriately and use a highly complex password.
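One way to produce a highly complex password for the emergency account is Python’s standard `secrets` module. This is a minimal sketch; the function name, the 32-character default, and the 16-character minimum are assumptions, and the result still has to be stored according to your own procedures.

```python
import secrets
import string


def generate_break_glass_password(length: int = 32) -> str:
    """Generate a random password containing all four character classes."""
    if length < 16:
        raise ValueError("use at least 16 characters for an emergency account")
    alphabet = string.ascii_letters + string.digits + string.punctuation
    while True:
        password = "".join(secrets.choice(alphabet) for _ in range(length))
        # Retry until every character class is represented.
        if (any(c.islower() for c in password)
                and any(c.isupper() for c in password)
                and any(c.isdigit() for c in password)
                and any(c in string.punctuation for c in password)):
            return password
```

`secrets` draws from the operating system’s cryptographically secure random source, which is why it is preferred over `random` for credentials.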

Following the steps outlined above provides a strong foundation with the appropriate entitlements, attributes, and processes to prepare AAD for application provisioning.

Manage Connected Applications

Application provisioning is on a per user basis by default with group assignment to applications being reserved for P1, P2, or equivalent AAD subscribers. Ensure that applications don’t provision high access through RBAC. There are multiple options, and automation is available for application provisioning. The initial provisioning cycle populates users, followed by programmatic incremental updates that handle updates made through Microsoft Graph or AD.
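The two-phase cycle can be pictured with a small Python sketch: an initial pass copies every user, and later passes apply only the changes. The data shapes and the `op`, `id`, and `attrs` keys are hypothetical and stand in for whatever change feed the provisioning service actually consumes.

```python
from __future__ import annotations


def initial_cycle(source_users: dict[str, dict]) -> dict[str, dict]:
    """Populate the target application with every user in scope."""
    return {uid: dict(attrs) for uid, attrs in source_users.items()}


def incremental_cycle(target: dict[str, dict], changes: list[dict]) -> dict[str, dict]:
    """Apply only the changes recorded since the previous cycle."""
    for change in changes:
        uid = change["id"]
        if change["op"] == "delete":
            target.pop(uid, None)
        else:  # "create" or "update"
            target[uid] = {**target.get(uid, {}), **change["attrs"]}
    return target
```

Incremental cycles keep the target current without re-reading the whole directory each time.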

Microsoft provides several options for attribute mapping from identities that originate from the “three paths” mentioned above via SCIM endpoints to cloud resources or the Azure AD Provisioning agent. The latter must run on the same server as your SCIM application. Microsoft also has options for one-way connections from AAD to LDAP or SQL database user stores, but those have several on-premises prerequisites. Provisioning users into AD DS isn’t supported.
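As an illustration of attribute mapping, the Python sketch below turns a directory user record into a SCIM 2.0 core User resource. The schema URN and the `userName`, `name`, `emails`, and `active` attributes come from the SCIM core schema; the particular source-to-target mapping and the `to_scim_user` helper are assumptions for this example, and real mappings are configured per application.

```python
import json

SCIM_USER_SCHEMA = "urn:ietf:params:scim:schemas:core:2.0:User"


def to_scim_user(directory_user: dict) -> dict:
    """Map a directory user record onto SCIM core User attributes."""
    return {
        "schemas": [SCIM_USER_SCHEMA],
        "userName": directory_user["userPrincipalName"],
        "name": {
            "givenName": directory_user.get("givenName", ""),
            "familyName": directory_user.get("surname", ""),
        },
        "emails": [
            {"value": directory_user["mail"], "type": "work", "primary": True}
        ],
        "active": bool(directory_user.get("accountEnabled", True)),
    }


if __name__ == "__main__":
    sample = {
        "userPrincipalName": "ana@example.com",
        "givenName": "Ana",
        "surname": "Lopez",
        "mail": "ana@example.com",
        "accountEnabled": True,
    }
    print(json.dumps(to_scim_user(sample), indent=2))
```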

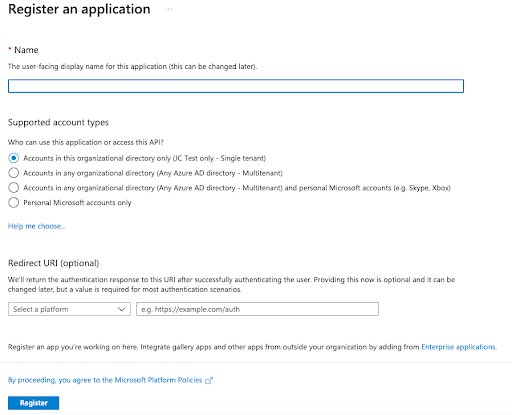

Siloed identities complicate existing identity practices and infrastructure as well as increase technical overhead and the attack surface area. Enable single sign-on (SSO) to centralize identity management either through AAD or a system or service that integrates with it.

Enable Single Sign-On

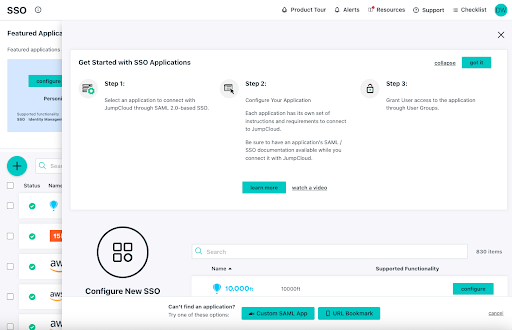

SSO will improve security through modern authentication protocols, make life easier for your users, and reduce management overhead. Microsoft has imposed restrictions on the number of SSO applications per user on its free tier, but that policy may be changing. AAD provides pre-built integrations through the Azure AD application gallery in addition to SAML and OAuth 2.0 SSO protocols for manual settings. Microsoft doesn’t support the AAA protocol RADIUS, which many network appliances use for access control, so its SSO doesn’t reach all of your resources. Consider using cloud RADIUS or installing and configuring the Microsoft NPS server role.

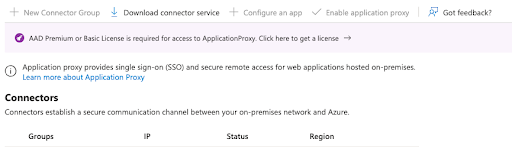

It’s possible for all AAD tiers to access native Windows apps via Kerberos, NTLM, LDAP, RDP, and SSH authentication in a hybrid deployment. However, identity protection features such as CA are limited to P1 and P2 products including Azure AD Application Proxy or secure hybrid partnerships integrations. These services will extend modern security to legacy apps.

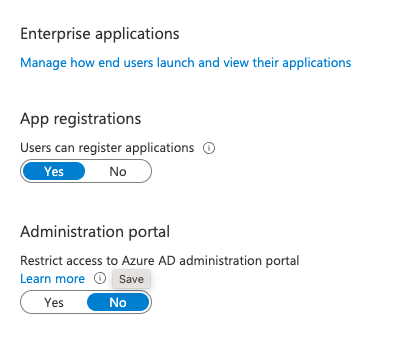

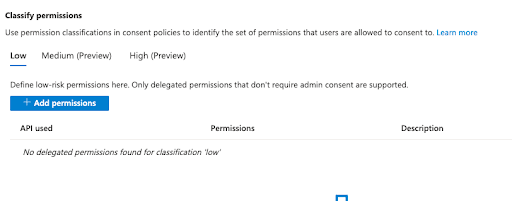

Phishing Considerations

Microsoft’s default settings permit all users to access the AAD admin portal and register custom SSO applets. Attackers are wise to this workflow and abuse OAuth in phishing attacks, which may bypass MFA. The principle of least privilege mandates that users who don’t need access shouldn’t receive it. Strongly consider restricting user-driven application consent and setting permission classifications to “low impact.” This also applies to group owners. Compliance boundaries are murkier and should be carefully assessed outside of the Microsoft ecosystem.
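The effect of a “low impact” classification can be sketched as a simple allowlist check: users may consent only when every requested permission is on the list, and anything else goes to an administrator. The scope names below are real OpenID Connect and Microsoft Graph permission names, but treating exactly this set as low impact is an assumption for illustration.

```python
from __future__ import annotations

# Assumed low-impact set; adjust to match your own classification.
LOW_IMPACT_SCOPES = frozenset(
    {"openid", "profile", "email", "offline_access", "User.Read"}
)


def review_consent_request(requested_scopes: list[str]) -> dict:
    """Decide whether a user may consent or an admin must review."""
    risky = sorted(set(requested_scopes) - LOW_IMPACT_SCOPES)
    return {"user_may_consent": not risky, "needs_admin_review": risky}
```

For example, an app that asks for `Mail.Read` in addition to `openid` would be routed to admin review rather than approved by the user who clicked a phishing link.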

AAD can be complex and Microsoft has amassed Azure partners for advanced specialization. Blocks of time with consultants should be a budgeting consideration for any AAD project. This writer, a former IT director, needed consultants even when projects appeared straightforward.

AAD is capable of alerting you to suspicious OAuth authorization requests, but that requires an additional subscription to Microsoft Cloud App Security, either standalone or through M365 E5. Other solutions such as CrowdStrike Falcon Identity Protection have this capability. JumpCloud is a CrowdStrike partner and integrates with its solutions through the CrowdStrike Store.

Now that you’re familiar with configuring users, groups, and applications, let’s review reporting.

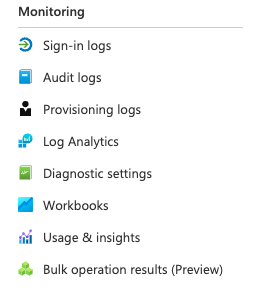

Audit Your Security Regularly

You should always look for ways to improve in-house security and processes. If you can’t stop it, you should at least monitor it. Regularly audit your entitlements and users, and review activity reports. Taking this extra step helps make security a process as opposed to relying solely on products and services.

Ideally, you’ll be monitoring all privilege changes, suspicious activity, and signs of known attacks. AAD will provide you with several reports:

- Basic security and usage reports are included among all subscription tiers

- Advanced reporting is restricted to P1 and P2

- SIEM reporting and Identity Protection require P2 (or equivalent) subscriptions
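Whatever tier you’re on, a simple recurring check can be built from exported sign-in data. The Python sketch below lists accounts that haven’t signed in within a chosen window so they can be reviewed or disabled. The input shape, the `stale_accounts` helper, and the 90-day default are assumptions; the export format depends on the reports available to you.

```python
from __future__ import annotations

from datetime import datetime, timedelta, timezone


def stale_accounts(last_sign_ins: dict[str, datetime | None],
                   now: datetime,
                   max_idle_days: int = 90) -> list[str]:
    """Return users who never signed in or have been idle too long."""
    cutoff = now - timedelta(days=max_idle_days)
    return sorted(
        user for user, seen in last_sign_ins.items()
        if seen is None or seen < cutoff
    )


if __name__ == "__main__":
    today = datetime(2022, 10, 1, tzinfo=timezone.utc)
    sample = {
        "ana": datetime(2022, 9, 20, tzinfo=timezone.utc),
        "bob": datetime(2022, 3, 1, tzinfo=timezone.utc),
        "svc-old": None,
    }
    print(stale_accounts(sample, today))
```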

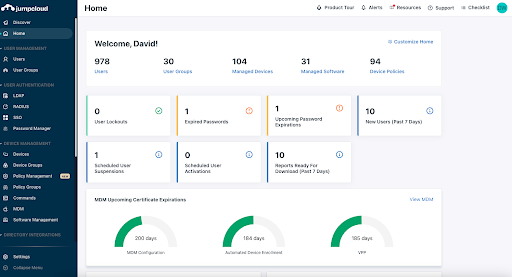

Some security capabilities may be more accessible and easier to deploy via JumpCloud, which integrates with AD, AAD/M365, Google Workspace, and Okta, or can function as a standalone directory. JumpCloud is focused on managing identities, in all places, as your security perimeter.

How JumpCloud Improves Upon Azure AD Best Practices

JumpCloud is an open directory platform that manages identities, access control, and devices. Devices are a method of granting access to an identity or application, so device management is included by default. That makes it possible to assemble high visibility telemetry data for reporting.

As previously noted, Microsoft requires its users to purchase additional subscriptions (Entra, M365 E3/5, AAD P1/2, and Intune for device management) to meet its recommendations for best practices. Standard AAD deployments fall short of Microsoft’s guidance, but some of its premium offerings may sell SMEs more features than they require or even want to purchase.

JumpCloud can help to fill in some of those gaps, and is easy to deploy, with deepening integrations for exporting AAD user groups. It’s designed for SMEs, so IT teams may benefit from having more control over what they’re buying (as opposed to not using what they pay for). The next section explores the specifics of how JumpCloud can improve AAD and help your organization to build the stack of its choosing out of best-of-breed apps and services.

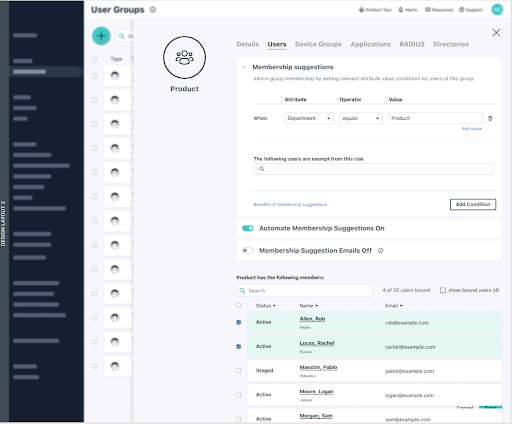

IAM and SSO

Identities flow into JumpCloud from other directories, HRIS systems, or JumpCloud’s Cloud LDAP. Attributes, such as where users are located, who their supervisor is, or what team they belong to, simplify provisioning user access to IT resources such as applications and networks.

Group management is provided at no additional cost and leverages attribute-based access control (ABAC), enabling the system to continuously audit entitlements for Zero Trust access control. JumpCloud is introducing the ability to automate and apply membership suggestions to groups. RBAC is more of a manual process, which can lead to mistakes that over or under provision users. Group members can access resources through SSO protocols and more:

- SAML

- OAuth 2.0

- OIDC

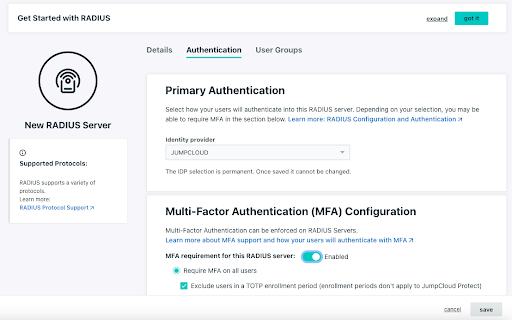

- RADIUS

- LDAP
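The attribute-based group membership described above can be pictured as rules evaluated against user attributes. The Python sketch below is a generic illustration, not JumpCloud’s implementation; the attribute names, group names, and helper functions are hypothetical.

```python
from __future__ import annotations


def matches(user_attrs: dict, rule: dict) -> bool:
    """A user matches when every attribute in the rule has the required value."""
    return all(user_attrs.get(key) == value for key, value in rule.items())


def resolve_memberships(users: dict[str, dict],
                        group_rules: dict[str, dict]) -> dict[str, list[str]]:
    """Recompute group membership from attributes instead of manual lists."""
    return {
        group: sorted(name for name, attrs in users.items() if matches(attrs, rule))
        for group, rule in group_rules.items()
    }
```

Because membership is recomputed from attributes, changing a user’s department or location updates access without anyone editing a group by hand.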

JumpCloud provides delegated authentication that leverages AAD credentials and password policies for RADIUS. This capability extends Azure SSO to network resources such as Wi-Fi networks and VPNs while also reducing technical overhead and eliminating siloed identities. SSO applets launch from within the JumpCloud user console as a security control for phishing.

Environment-Wide MFA

JumpCloud Protect™, an integrated authenticator app for MFA, is designed to be frictionless. It provides application-based Push MFA and TOTP in addition to WebAuthn and U2F keys. More options for biometric authentication and passwordless log-in experiences are being added to the platform.
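For readers unfamiliar with TOTP, the following Python sketch shows how a time-based one-time password is derived according to RFC 6238 (which builds on HOTP from RFC 4226). It uses only the standard library and is a generic illustration of the algorithm, not JumpCloud Protect’s code; the function names are chosen for this example.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using SHA-1."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at_time: float, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a time counter."""
    return hotp(secret, int(at_time) // step, digits)


if __name__ == "__main__":
    shared_secret = b"12345678901234567890"  # RFC test secret, not for real use
    print(totp(shared_secret, time.time()))
```

The server and the authenticator app share the secret and compute the same code for the current 30-second window, so nothing needs to be transmitted to the device at sign-in time.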

MFA can be configured for most SSO, LDAP, and RADIUS logins. It’s also integrated with CA.

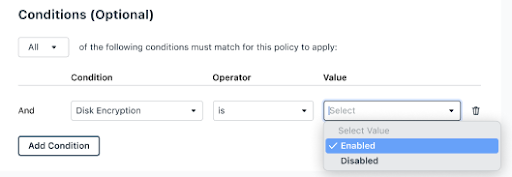

Conditional Access

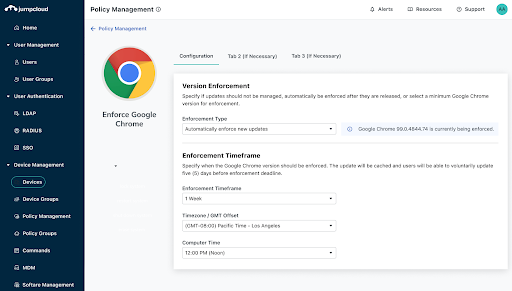

AAD identities can be protected by conditional access through JumpCloud as an add-on without purchasing P1 or P2 from Microsoft. Pre-built rules are available to enforce MFA for privileged user groups, restrict logins to specific locations, and require device trust. With device trust, any identity and device combination that isn’t managed by JumpCloud won’t be able to access cloud apps. More granular conditions such as OS version and device encryption status are coming soon.

Password Management

A decentralized password manager and vault is available as an add-on through browser plug-ins and mobile apps to help SMEs implement complex passphrases for users. This feature assists with provisioning and revoking user access to reduce the risk of data breaches. Centralized password management also increases visibility for compliance peace of mind.

Device Management

JumpCloud is cross-OS, supporting:

- Android: Support for policies and application distribution is coming in late 2022 and beyond.

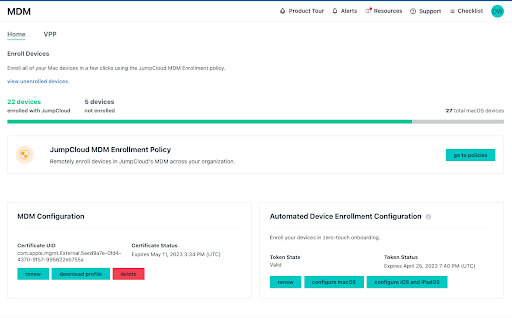

- Apple products: Mobile Device Management (MDM) is available for macOS and iOS devices, providing application distribution, policies, and commands with the option for Zero Trust deployment. Policies are timely and in touch with the needs of Mac admins, including addressing “Day 0” OS upgrade controls.

- Linux: JumpCloud supports multiple Linux distros with multiple deployment options. It provides pre-built policies, including full disk encryption (FDE), and Sudo access for commands (with pre-built security commands through the Admin Console). IAM capabilities aren’t restricted to certain browsers; Microsoft mandates Edge for Intune device enrollment. Intune is an additional subscription beyond standalone AAD.

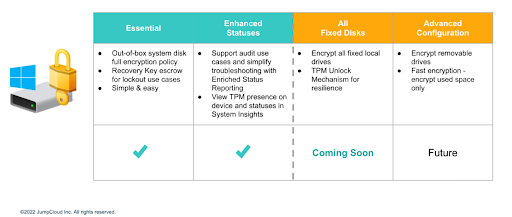

- Windows: Anything an admin wishes to do is possible through security commands and a PowerShell module. Commands function through a queue. JumpCloud provides pre-built GPO-like policies, including fine-grained control over BitLocker, as well as a GUI for custom policies. There’s also software distribution, and more, with Windows Out of Box Experience (OOBE) coming soon to streamline onboarding remote workers.

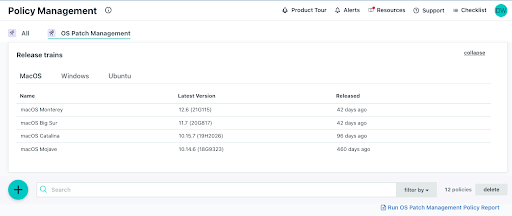

Patch Management

JumpCloud offers cross-OS patching as an add-on. Patching keeps devices in a healthy state and mitigates the risk of security breaches that leverage zero-day attacks. Centralizing patch management helps to reduce costs versus purchasing a third-party patch management solution for Windows and all other operating systems. Browser patch management is arriving in Q4 2022, and it will extend to reporting for management status.

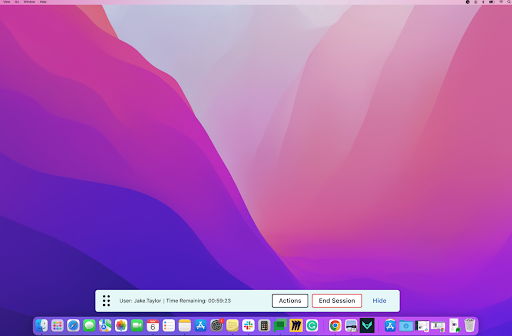

Remote Assist

IT teams can extend opt-in remote support to users with Remote Assist. It’s free and works cross-OS. The only configuration that’s required is to have JumpCloud agents running on a device that’s bound to an identity from the open directory. It’s possible to:

- Copy and paste between devices

- Work in multi-monitor systems

- Turn on audit logging

Reporting

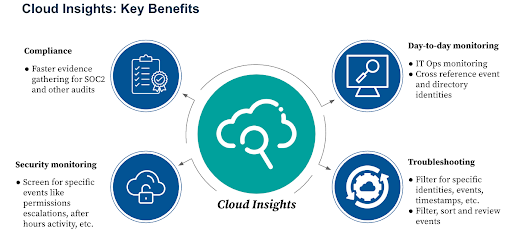

JumpCloud’s emphasis on making identity the new perimeter is reflected in the telemetry that’s available from built-in reporting tools including Device Insights and Directory Insights. There’s a growing selection of pre-made reports, stored for analysis. SIEM integration is also possible.

Some of those include:

- User to Devices

- User to RADIUS Server

- User to LDAP

- User to Directories

- User to SSO Applications

- OS Patch Management Policy

Cloud Insights is an add-on to monitor Amazon Web Services (AWS) events and user actions. This makes compliance and data forensics easier for SMEs and helps to enforce least privilege in cloud infrastructure. Support for Google Cloud (GCP) will be introduced next for a multi-cloud strategy.

Avoid Vendor Lock-In and Do More with JumpCloud

JumpCloud is available to try with full functionality for 10 users and devices, and with 10 days of complimentary chat support before charges are assessed. AAD users benefit from more freedom of choice, simpler deployment workflows, access to more sources, and lower costs.

Sometimes self-service doesn’t get you everything you need. If that’s how you’re feeling, schedule a 30-minute consultation to discuss options for implementation assistance, migration services, custom scripting, and more.

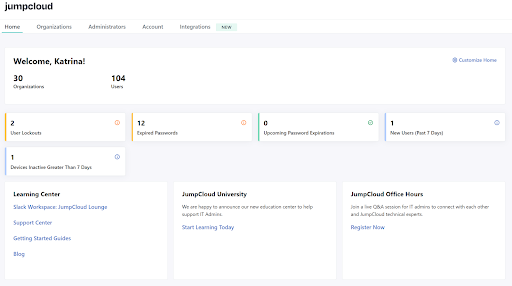

Similarly, managed service providers (MSPs) receive 10 free user accounts within the first organization that they create in the multi-tenant portal, JumpCloud’s dedicated MSP solution.

About Version 2 Digital

Version 2 Digital is one of the most dynamic IT companies in Asia. The company distributes a wide range of IT products across various areas including cyber security, cloud, data protection, end points, infrastructures, system monitoring, storage, networking, business productivity and communication products.

Through an extensive network of channels, points of sale, resellers, and partnership companies, Version 2 offers quality products and services which are highly acclaimed in the market. Its customers cover a wide spectrum that includes Global 1000 enterprises, regional listed companies, different vertical industries, public utilities, government, a vast number of successful SMEs, and consumers in various Asian cities.

About JumpCloud

At JumpCloud, our mission is to build a world-class cloud directory. Not just the evolution of Active Directory to the cloud, but a reinvention of how modern IT teams get work done. The JumpCloud Directory Platform is a directory for your users, their IT resources, your fleet of devices, and the secure connections between them with full control, security, and visibility.