Intro

Alrighty! This one has long been on my to-do list! OpSec! Why, how, when, who… how much and how long… Let’s see! Let’s answer some questions. Whether you’re sleuthing for some OSINT investigation, or your country is blocking you from watching your favorite shows on Netflix, you need to figure out your specific OpSec needs, and behave accordingly.

This one is a particularly interesting topic, as it combines a lot of elements – from purely technical to some psychological/behavioural ones.

Before I go further into the topic, I’d like to emphasize that this is my opinion. You can always argue the technicalities, sure, but the methodology is unique, as it should be (again, in my opinion). That said, this article doesn’t discuss that many technical aspects anyway.

This is quite logical, as we all have different needs and operate within different contexts. What’s true in my case might not necessarily be true in yours. So, with that in mind, one might ask: what’s there to learn, then? It’s a valid question! However, what we’re interested in here is the approach, which is also unique to each and every one of us.

My goal here is to help you take more control of your own privacy and build a healthier, safer online presence. That presence surely accounts for a large portion of your life in general, as we’re all becoming more and more dependent on a myriad of online services.

Now that the intro is done, and I’ve safely fenced off the personal aspect of this topic, let us proceed.

Basics

At its core, OpSec is just risk management. In the personal case (the one I’m looking at), the risk comes from whatever you deem worth incorporating into your model. Thus, you’d also want a threat model, though there’s no need to go overboard here. In this context, it simply means identifying which information you want to protect at all costs (i.e., no leaks whatsoever) and which, if any, you’d be fine with being discovered. I purposefully led with the first sentence above because you will probably encounter many definitions, but the bottom line is always the I/O of your personal information. You want to control both the I and the O, but you definitely have to focus on the O. In other words, your model and the way you conduct yourself should be in sync with what you’re trying to achieve. Again, based on the model.

You want to prioritize as well. Best practices are best practices for a reason, but they lose their value rapidly if applied blindly (without the context to match). I debated with myself whether to include best practices at the end of this article and, in the end, opted for it. The argument against it was the second sentence of this paragraph. I am counting on you here!

Traditionally, OpSec is described in stages (which really makes sense for three-letter agencies and the military), and I will mention them here as well. However, I’m doing that for contextual and historical reasons, and to keep you better informed. In the end, you’re not required to draft any complex models based on the ones the military uses. Their threat model is surely different from yours, and so are their OpSec needs. The methodology, or approach, is what you should focus on. So, before taking any action, pause and think it through. Future you will be better off, i.e., safer online.

Defining OpSec

Okay! I am now going to reference some definitions so you can see how they compare to what I’ve been trying to convey.

1 – (Published by Range Commanders Council, U.S. Army White Sands Missile Range – full .pdf document found here)

The OPSEC is a process of identifying, analyzing, and controlling critical information indicating friendly actions attendant to military tactics, techniques, and procedures (TTPs), capabilities, operations, and other activities to:

- Identify actions that can be observed by adversarial intelligence systems.

- Determine what indicators adversarial intelligence systems might obtain that could be interpreted or combined to derive critical information in time to be useful to adversaries.

- Select and execute measures that eliminate or reduce to an acceptable level the vulnerabilities of friendly actions to adversary exploitation.

As you can see, this is very applicable to us as well! The TTPs of well-known APTs (Advanced Persistent Threats) are already documented by MITRE in its ATT&CK framework (since I am talking about the cyber domain), and aside from the specifics of military operations, this definition seems quite solid!

To digress for a bit:

If you’re the CEO of a large enterprise targeted by advanced threat actors, you might care more about those APT TTPs. For an average person who wants to prioritize their privacy online, the ‘TTPs’ are quite different in nature. However, the goal is the same: a script kiddie trying to mess something up for the fun of it might not call for APT-level controls, yet they can still devastate you. Take it all into account and assess critically. Start with the basics and build from there; don’t go overboard only to get pwned by a newbie hacker who bought a phishing kit on the Darkweb.

Circling back to the definitions, let’s do one more:

2 – (Published by the Department of the Navy, US Marine Corps – the .pdf can be found here)

OPSEC is a capability that identifies and controls critical information and indicators of friendly force actions attendant to military operations, and incorporates countermeasures to reduce the risk of an adversary exploiting vulnerabilities. When effectively employed, it denies or mitigates an adversary’s ability to compromise or interrupt a mission, operation, or activity. Without a coordinated effort to maintain the essential secrecy of plans and operations, our enemies can forecast, frustrate, or defeat major military operations. Well-executed OPSEC helps to blind our enemies, forcing them to make decisions with insufficient information.

This is it: “…a capability that identifies and controls critical information… and incorporates countermeasures to reduce the risk of an adversary exploiting vulnerabilities…”

As you can see, proactivity lies at the heart of OpSec: you take into account whatever could exploit your vulnerabilities and draft a plan to mitigate it before it happens. There is no reactive OpSec! At that point, you’ve already been pwned, and depending on the criticality of the breach, you’re in some (deep) trouble. This also means that one misstep in your (not so) robust model is enough to take it all down. And it makes sense: a hacker just needs to find one way in, and they’re off to the races!

Stages of OpSec – OpSec process

The US Navy document contains a neat little graphical representation of the OpSec stages.

From 1 to 5, you’d have:

- Identification of critical information

- Analysis of threats

- Analysis of vulnerabilities

- Assessing the risk

- Applying countermeasures

This is also what you’d get if you Googled for “OpSec stages.”

However, analysing threats might be hard(er) for you as a civilian: you’re not really anyone’s target, but you can still end up being one. Just some more food for thought.

(Also, note that the process is circular: once countermeasures are applied, you go back to identifying critical information.)
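To make the five stages (and the circularity) a bit more concrete, here is a minimal Python sketch of one pass through the cycle for a personal threat model. Everything in it is an assumption for illustration, not something taken from either document: the `Asset`, `Threat` and `Vulnerability` types, the 1 to 5 likelihood and impact scales, the common “risk = likelihood × impact” convention, the threshold, and the example data.

```python
from dataclasses import dataclass


@dataclass
class Asset:               # Stage 1: identify critical information
    name: str
    must_never_leak: bool  # True = protect at all costs; False = tolerable if discovered


@dataclass
class Threat:              # Stage 2: analyse threats
    actor: str
    target: str            # name of the Asset this actor is after
    likelihood: int        # assumed scale: 1 (rare) to 5 (almost certain)


@dataclass
class Vulnerability:       # Stage 3: analyse vulnerabilities
    asset: str
    weakness: str
    impact: int            # assumed scale: 1 (minor) to 5 (devastating)
    countermeasure: str


def assess_risk(assets, threats, vulns):
    """Stage 4: score every threat/vulnerability pair that hits the same asset.

    risk = likelihood * impact (an assumed, common convention). Results are
    sorted so must-never-leak assets come first, then by descending score.
    """
    critical = {a.name for a in assets if a.must_never_leak}
    risks = [
        (t.likelihood * v.impact, t.actor, v)
        for t in threats
        for v in vulns
        if v.asset == t.target
    ]
    return sorted(risks, key=lambda r: (r[2].asset in critical, r[0]), reverse=True)


def plan_countermeasures(risks, threshold=6):
    """Stage 5: pick countermeasures for risks at or above an (arbitrary) threshold."""
    plan = []
    for score, _actor, v in risks:
        if score >= threshold and v.countermeasure not in plan:
            plan.append(v.countermeasure)
    return plan


# One pass through the cycle with made-up example data.
assets = [Asset("home IP address", True), Asset("browsing interests", False)]
threats = [
    Threat("malicious website", "home IP address", 3),
    Threat("ad tracker", "browsing interests", 5),
]
vulns = [
    Vulnerability("home IP address", "WebRTC leak in the browser", 4, "block WebRTC leaks"),
    Vulnerability("browsing interests", "third-party cookies", 1, "accept the risk"),
]
plan = plan_countermeasures(assess_risk(assets, threats, vulns))
print(plan)
# The process is circular: after applying the plan, go back to stage 1 and repeat.
```

The scales, threshold and scoring formula are all interchangeable; the point of the sketch is only that countermeasures are chosen from the assessed risk rather than applied blindly.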

Anonymity vs Privacy

This is the hot stuff right here! VPNs are there for your privacy, not anonymity; anonymity is what Tor is all about. You need to understand this distinction.

The idea is that privacy means keeping some things to yourself, and this can include your actions. Anonymity, on the flip side, is supposed to keep your identity private, but not your actions.

This is a very, very brief overview, but it’s a start. Check out this blog for a bit more information on these two terms. Or this one.

Good (best?) practices

It’s a bit of a stretch to call these best practices, which is why I purposefully added ‘good’ to the title. Let’s review some:

- Don’t talk openly (about your ‘mission’ critical stuff) – Duh!

- Don’t operate from home – that is, if you intend to do anything that requires that level of separation from your real persona. If you’re just doing normal stuff where that’s a non-issue, adjust accordingly. (I am not trying to help you operate a botnet; besides, those guys already know this stuff.)

- Encrypt everything – this is a great one, though, again, it might not be necessary in your case. You might want to encrypt and safely store only the most critical data. Also, this does require a fair bit of technical knowledge.

- Create personas – This is the anonymity part. If you really need to do something that isn’t a great idea to do as ‘you,’ create another persona and be them. Sock puppets are a great example, such as the ones used in the OSINT CTFs I like to participate in.

- Don’t contaminate – This one ties into the previous one. If you have personas, don’t cross-contaminate them; contaminated personas actually work against you, and you’d be better off just using other controls to protect the real you. It can backfire. Significantly.

- Don’t trust – I mean… you’re on the Internet after all, adopt a healthy amount of paranoia if you haven’t already.

- Be paranoid – See the previous one. Even if nobody’s out to get you personally, that doesn’t mean that they’re not out to get you. That’s just the Internet for you.

- Don’t give people power over you – Just don’t. Don’t overshare; be careful. “Anything you say can and will be used against you” is very, very true in this context as well.
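The “Don’t contaminate” rule can be checked mechanically if you keep an inventory of the identifiers each persona uses. Below is a minimal Python sketch, assuming a hypothetical inventory in which each persona (including the real you) maps to a set of identifiers such as email addresses, usernames or phone numbers; any identifier shared by two personas is a link someone could follow. The function name and the example data are made up for illustration.

```python
from itertools import combinations


def find_cross_contamination(personas):
    """Return {(persona_a, persona_b): shared identifiers} for every overlapping pair.

    `personas` maps a persona name to the set of identifiers it uses
    (hypothetical inventory: emails, usernames, phone numbers, ...).
    """
    overlaps = {}
    # Sorting by name gives a stable pair order; names are unique, so the
    # sets themselves are never compared.
    for (name_a, ids_a), (name_b, ids_b) in combinations(sorted(personas.items()), 2):
        shared = ids_a & ids_b  # set intersection = identifiers linking the two
        if shared:
            overlaps[(name_a, name_b)] = shared
    return overlaps


personas = {
    "real me": {"jane.doe@example.com", "+1-555-0100", "janedoe"},
    "osint_sock": {"sock.puppet@example.org", "janedoe"},  # reused username!
    "ctf_persona": {"ctf.player@example.net"},
}
for pair, shared in find_cross_contamination(personas).items():
    print(pair, "share", sorted(shared))
```

Keep in mind this only catches exact, recorded matches; it won’t spot subtler links such as writing style or the hours you’re active.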

Technical good (best?) practices

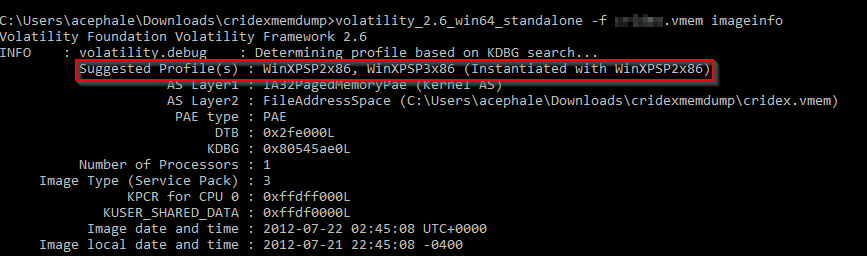

- Don’t be the only person using Tor on a monitored network. That’s how they caught this guy.

- Don’t do stuff on your own infrastructure that might get you in trouble

- VPN provides privacy! Not anonymity

- Isolate/segment your environment if there’s a need – I’m thinking VMware, VirtualBox, etc.

- Check for DNS leaks

- If your use case calls for it, use Tails/Qubes OS/Whonix

- Strong passwords! (And a password manager – I prefer KeePass, as it keeps the stuff locally and you can add more functionality through plugins, such as 2FA)

- Encrypt your disk, encrypt important stuff – where needed

- Protect yourself against WebRTC leaks

- Educate yourself on browser fingerprinting – there’s a lot of stuff online (as well as right here on Vsociety!) There’s this article, too.
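On the “Strong passwords!” point: a password manager like KeePass has its own generator, so you normally won’t need code for this, but a short Python sketch shows what “strong” means in practice. It uses the standard library’s `secrets` module (intended for security-sensitive randomness, unlike `random`) and estimates entropy as length × log2(alphabet size). That estimate only holds for passwords picked uniformly at random like these, not for human-chosen ones, and the 20-character default is an assumption, not a recommendation from this article.

```python
import math
import secrets
import string

# Letters, digits and punctuation: 52 + 10 + 32 = 94 printable ASCII characters.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length=20, alphabet=ALPHABET):
    """Pick each character uniformly at random using a CSPRNG."""
    return "".join(secrets.choice(alphabet) for _ in range(length))


def entropy_bits(length, alphabet_size):
    """Entropy of a uniformly random password: length * log2(alphabet_size)."""
    return length * math.log2(alphabet_size)


pw = generate_password()
print(pw)
print(f"~{entropy_bits(len(pw), len(ALPHABET)):.0f} bits")
```

Each extra character adds about 6.5 bits with this alphabet, which is why length matters more than memorable “clever” substitutions.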

Conclusion

I am hoping I’ve piqued your interest with all this OpSec talk. It might seem like overkill to someone coming from a different field, but I assure you it is not! The Internet is a hostile place, and in a hostile environment you’d act accordingly, right? If you’re on vacation in, let’s say, a gorgeous but realistically dangerous place like some cities in Latin America, you would be careful. Do the same online. Know where you’re treading, and act accordingly.

This is all for now! I hope I’ve made a decent introduction for some of the upcoming articles that will focus on the Darkweb, all the while bringing this fascinating topic closer to you. Stay tuned!

Some additional cool stuff to check out

https://www.youtube.com/watch?v=zXmZnU2GdVk

https://www.youtube.com/watch?v=8u7yyFYvzC4

https://www.youtube.com/watch?v=9XaYdCdwiWU

https://www.youtube.com/watch?v=eQ2OZKitRwc

Cover image by ueberform


About Version 2 Digital

Version 2 Digital is one of the most dynamic IT companies in Asia. The company distributes a wide range of IT products across various areas including cyber security, cloud, data protection, end points, infrastructures, system monitoring, storage, networking, business productivity and communication products.

Through an extensive network of channels, point of sales, resellers, and partnership companies, Version 2 offers quality products and services which are highly acclaimed in the market. Its customers cover a wide spectrum which include Global 1000 enterprises, regional listed companies, different vertical industries, public utilities, Government, a vast number of successful SMEs, and consumers in various Asian cities.

About VRX

VRX is a consolidated vulnerability management platform that protects assets in real time. Its rich, integrated features efficiently pinpoint and remediate the largest risks to your cyber infrastructure. Resolve the most pressing threats with efficient automation features and precise contextual analysis.