Editor’s Note: This article was originally published on the Fintech Open Source Foundation (FINOS) blog and is reprinted here with permission.

Financial organizations increasingly rely on open source software as a foundational component of their mission-critical infrastructure. In this blog post, we explore the top open source trends and technologies used within the FinTech space, drawn from our latest State of Open Source Report, along with insights into the unique pain points these companies experience when working with OSS.

About the State of Open Source Survey

OpenLogic by Perforce conducts an annual survey of open source users, specifically focused on open source usage within IT infrastructure. In 2024, we teamed up with the Open Source Initiative for the third year in a row and brought on a new partner, the Eclipse Foundation, which helped us expand our reach and collect more responses than ever before.

If you are looking for the non-segmented results from the entire survey population (not just respondents working in the financial sector), you can find them in our full 2024 State of Open Source Report.

Demographics and Firmographics

For the purposes of this blog post, we segmented the results to focus on the Banking, Insurance, and Financial Services verticals. This segment, comprising 250 responses, represented 12.22% of our overall survey population. Before we dive into some of the key results of the survey, let's look at the demographic and firmographic data points that will help frame them.

Among respondents representing the Banking, Insurance, and Financial Services verticals, the largest share worked for companies headquartered in North America (32% of responses), with Africa, Asia, and Europe as the next most common locations at 18.8%, 17.6%, and 16%, respectively.

The top three roles for respondents were System Administrators (32%), Developers / Engineers (18.8%), and Managers / Directors (16.4%). Within this segment, we also saw strong large-enterprise representation, with 38.4% of respondents stating they work at companies with over 5,000 employees.

Open Source Adoption

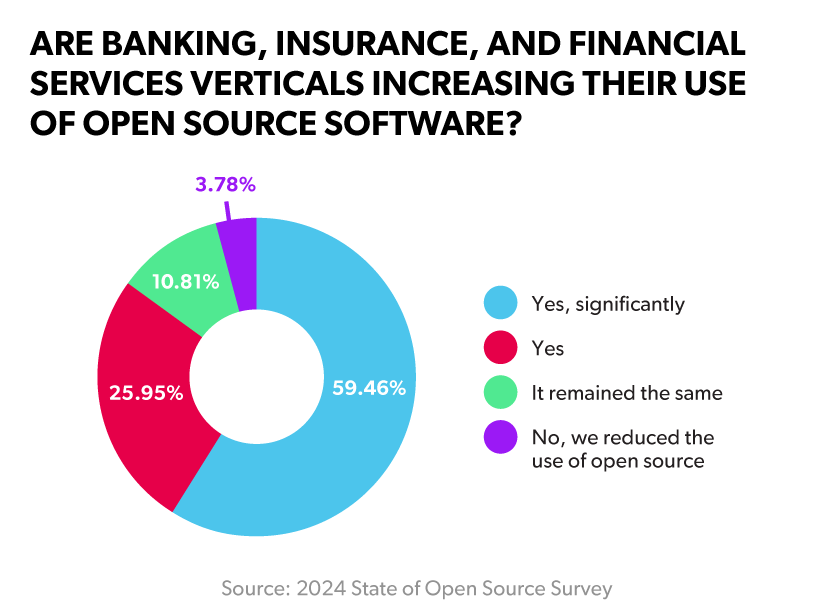

Our survey data painted a clear picture: a combined 85.4% of respondents from these industries are increasing their use of open source software, and 59.4% said they are increasing it significantly. This rate of adoption within a heavily regulated set of verticals suggests that many companies are confidently deploying open source for their mission-critical applications.

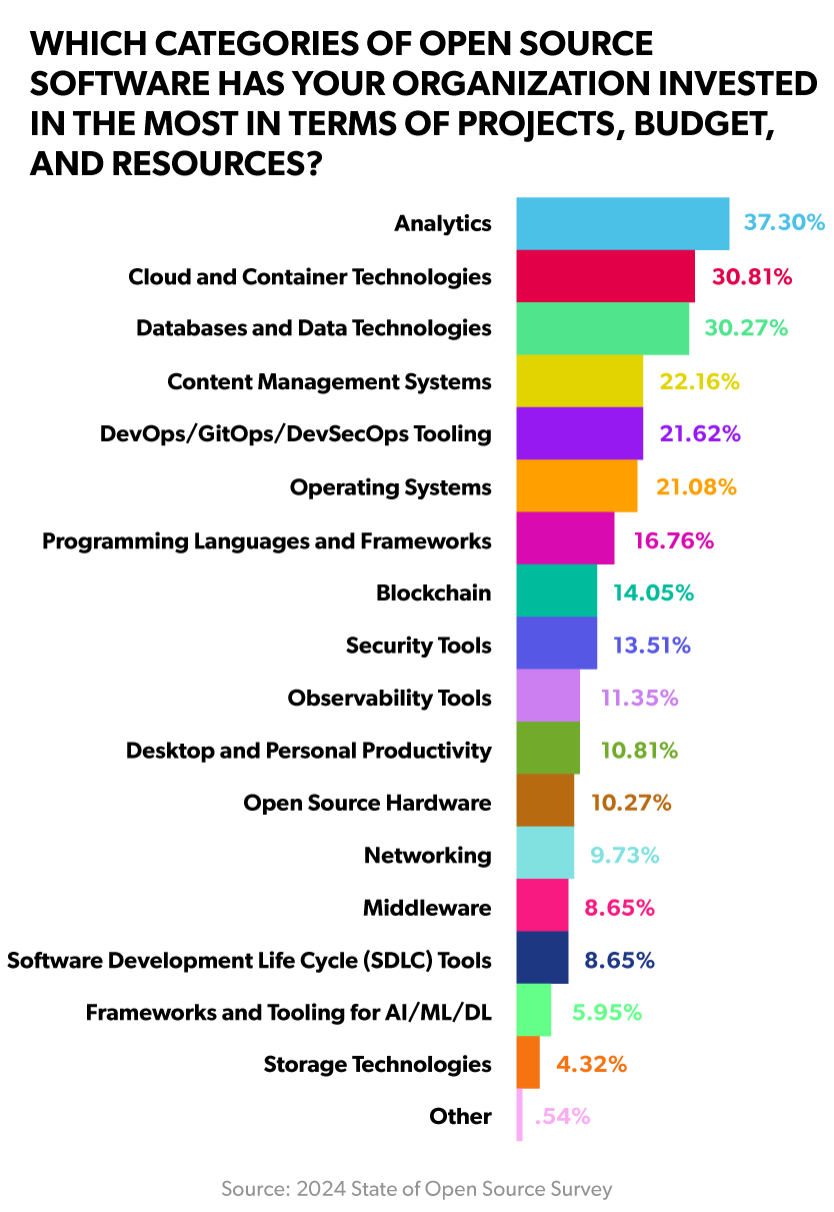

Looking more granularly at areas of open source investment, we saw 37.3% from this segment investing in analytics, 30.8% investing in cloud and container technologies, and 30.3% investing in databases and data technologies.

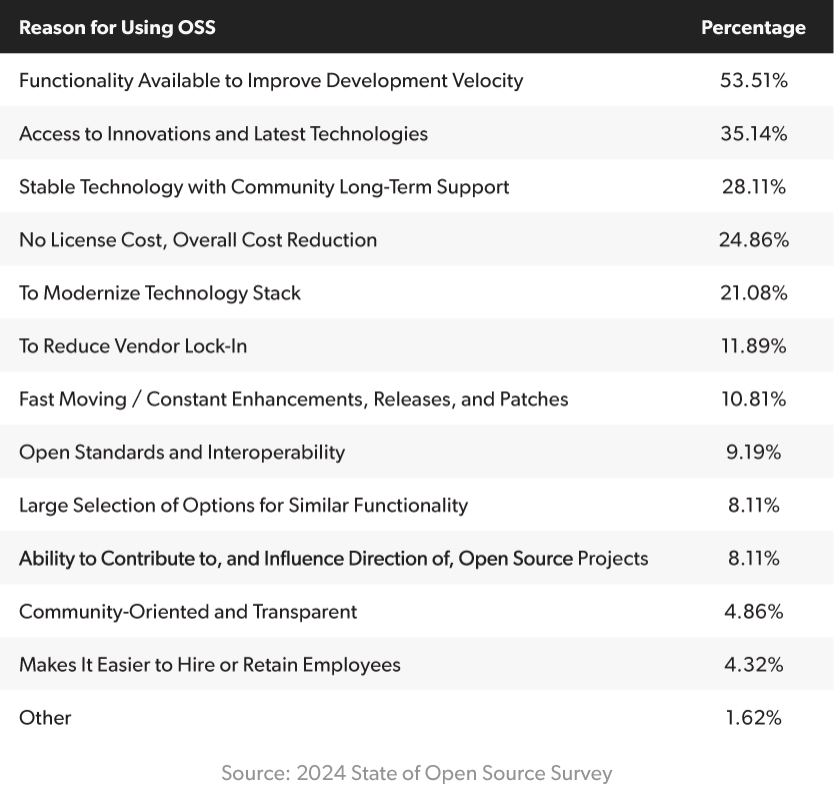

When asked why they adopt open source technology, respondents identified improving development velocity (53.51%), accessing innovation (35.14%), and the overall stability of these technologies (28.11%) as the top drivers. Cost reduction (24.86%) and modernization (21.08%) rounded out the top five reasons within the segment.

Top Challenges When Using Open Source Software

When we asked teams to share the biggest issues they face when working with open source software, some key themes emerged. Companies within this segment identified maintaining security policies and compliance (56%), keeping up with updates and patches (49.09%), and not having enough personnel (49.05%) as the biggest challenges.

Later in the survey, we asked specifically how organizations are addressing open source skill shortages. The top tactics selected by our respondents were hiring experienced professionals (48.18%), hiring external consultants or contractors (44.53%), and providing internal or external training (40.88%).

Infrastructure scalability and performance issues (67.98%) and lack of a clear community release support process (59.75%) represented the least challenging areas for respondents within this segment.

Top Open Source Technologies

The State of Open Source Report has sections dedicated to technology categories (e.g., programming languages, databases) to assess which projects are gaining adopters and going strong versus those that may be declining in popularity. As a reminder, the following results are specific to the Banking, Insurance, and Financial Services verticals.

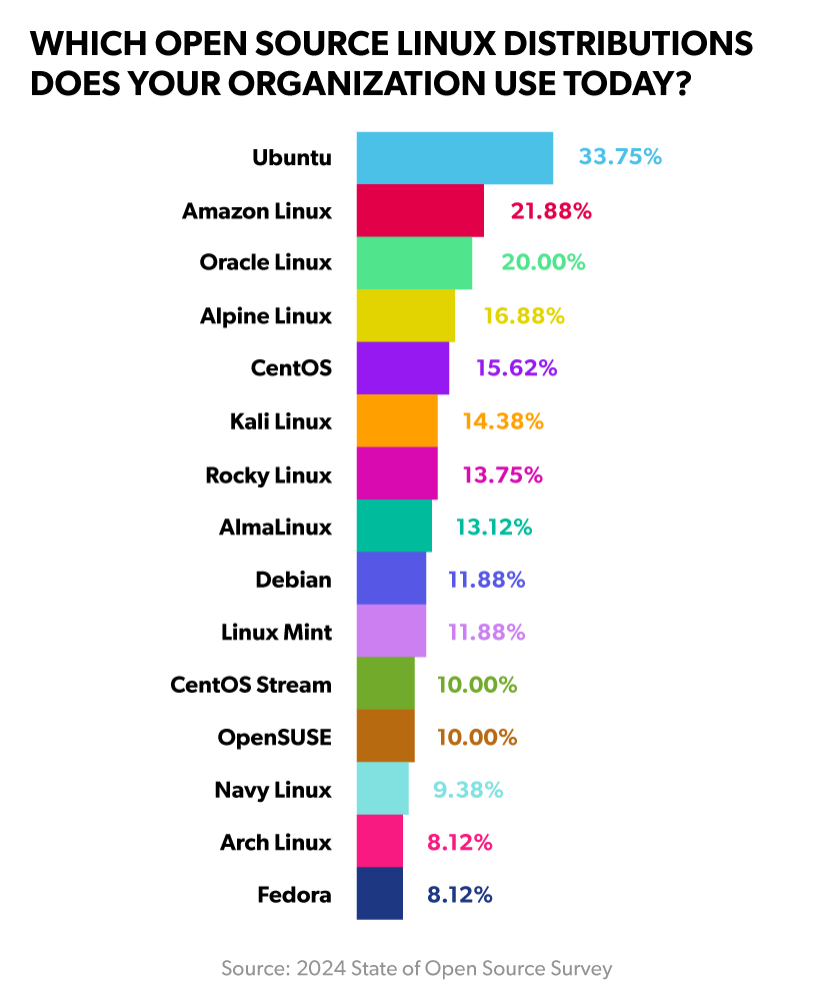

When looking at Linux distributions, the top five selections were:

- Ubuntu (33.75%)

- Amazon Linux (21.88%)

- Oracle Linux (20.00%)

- Alpine Linux (16.88%)

- CentOS (15.62%)

Here’s the full breakdown:

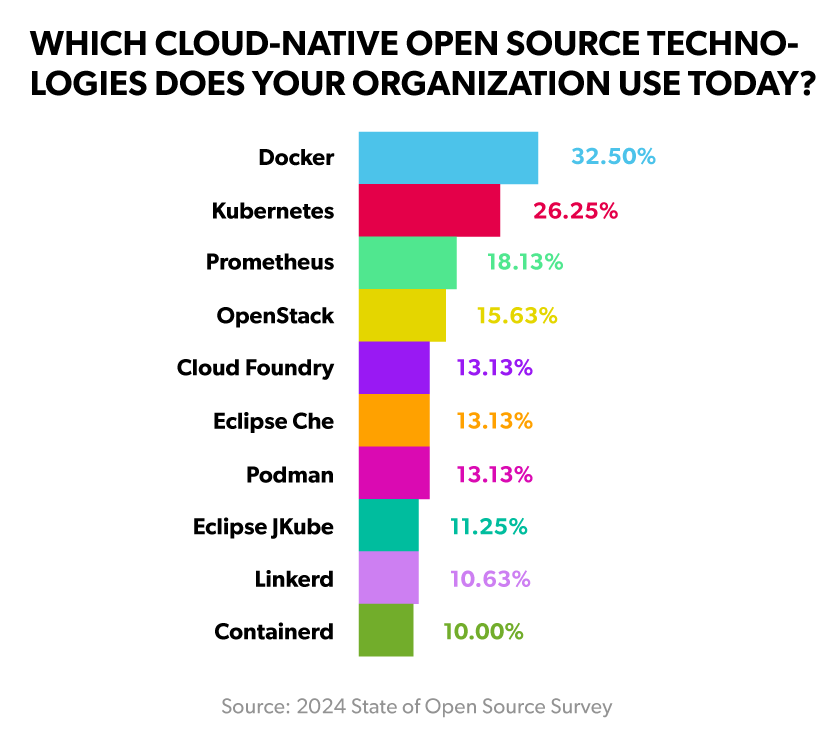

Looking at cloud-native technologies, the top five selections were:

- Docker (32.50%)

- Kubernetes (26.25%)

- Prometheus (18.13%)

- OpenStack (15.63%)

- Cloud Foundry (13.12%)

This chart shows the top 10:

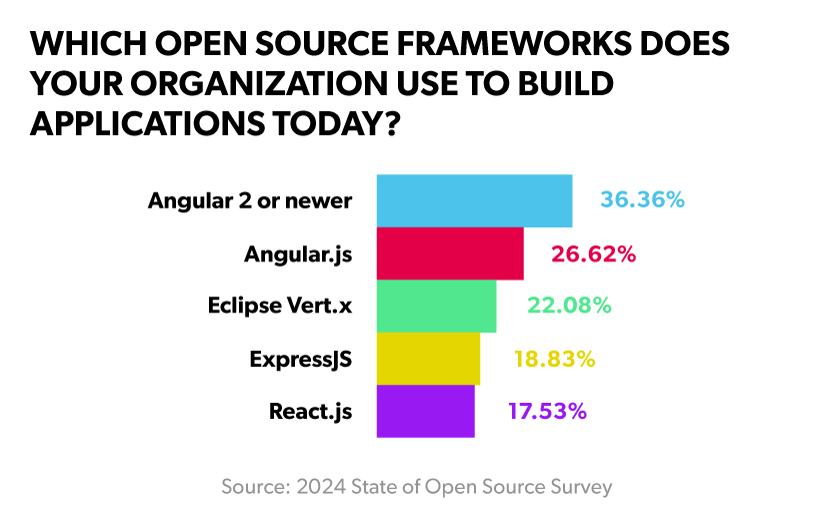

For open source frameworks, we noticed that a surprising share of respondents (26.62%) reported using AngularJS, which has been end of life since 2021.

We asked those using AngularJS a follow-up question about how they plan to address new vulnerabilities. 30.77% said they won't patch the CVEs, 26.92% noted that they have a vendor that provides patches, and 19.23% said they will look for a long-term support vendor to help when the time comes.

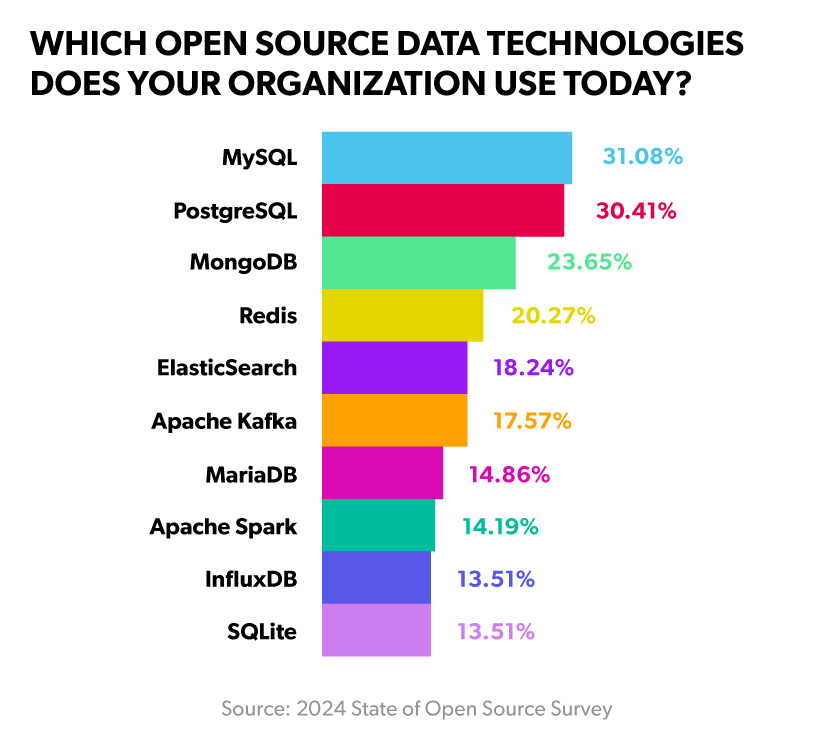

In terms of open source data technology usage, we saw MySQL (31.08%) and PostgreSQL (30.41%) at the top of the list, with MongoDB (23.65%), Redis (20.27%), and Elasticsearch (18.24%) rounding out the top 5.

In the full report, we also look at the top programming languages and runtimes, infrastructure automation and configuration technologies, DevOps tools, and more.

Open Source Maturity and Stewardship

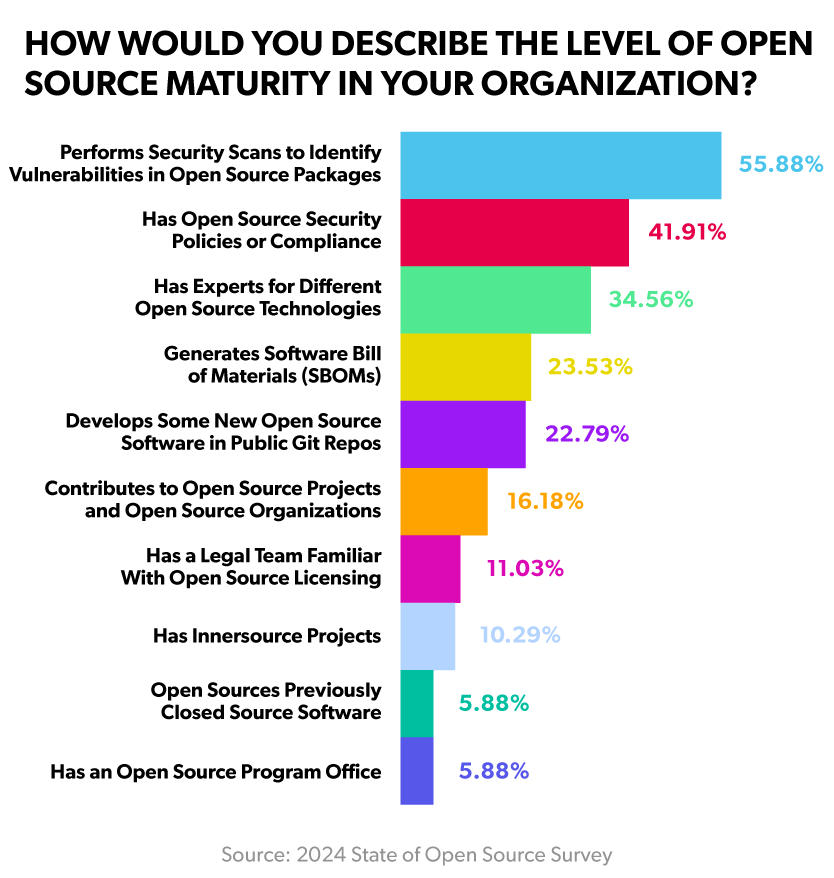

At the end of the survey, we asked respondents to share information about the overall open source maturity of their organizations. 55.88% noted that they perform security scans to identify vulnerabilities within their open source packages, 41.91% noted that they have established open source compliance or security policies, and 34.56% have experts for the different open source technologies they use.

Another marker of organizational open source maturity is sponsorship of nonprofit open source foundations. The most supported organizations among the Banking, Insurance, and Financial Services verticals were the Apache Software Foundation (27.94%), the Open Source Initiative (22.06%), and the Eclipse Foundation (19.85%). It's also worth noting that 19.85% of respondents didn't know of any official sponsorship of these organizations within their company. Overall, 89.41% noted that they sponsored at least one open source nonprofit organization.

Final Thoughts

In this blog post, we looked at segmented data from our 2024 State of Open Source Report specific to the Banking, Insurance, and Financial Services verticals. Considering that these industries are heavily regulated, with most firms required to meet compliance requirements for their IT infrastructure, it was encouraging to see over 85% of respondents increasing their use of open source software.

Not surprisingly, maintaining security policies and compliance was a top challenge for this segment. Given the current pace of open source adoption within this space, we expect this to remain a pain point. It's up to organizations to manage the complexity that comes with juggling so many open source packages and, ultimately, to ensure they have the technical expertise on hand to support that software, especially when it's used in mission-critical IT infrastructure.

About Perforce

The best-run DevOps teams in the world choose Perforce. Perforce products are purpose-built to develop, build and maintain high-stakes applications. Companies can finally manage complexity, achieve speed without compromise, improve security and compliance, and run their DevOps toolchains with full integrity. With a global footprint spanning more than 80 countries and including over 75% of the Fortune 100, Perforce is trusted by the world's leading brands to deliver solutions to even the toughest challenges. Accelerate technology delivery, with no shortcuts.

About Version 2 Digital

Version 2 Digital is one of the most dynamic IT companies in Asia. The company distributes a wide range of IT products across various areas including cyber security, cloud, data protection, end points, infrastructures, system monitoring, storage, networking, business productivity and communication products.

Through an extensive network of channels, point of sales, resellers, and partnership companies, Version 2 offers quality products and services which are highly acclaimed in the market. Its customers cover a wide spectrum which include Global 1000 enterprises, regional listed companies, different vertical industries, public utilities, Government, a vast number of successful SMEs, and consumers in various Asian cities.

We are delighted to announce our acquisition of Delphix, a best-in-class leader in Enterprise Data Management solutions. I want to share with you why I am personally excited about this major milestone in our company’s continued DevOps evolution and the benefits this acquisition provides to our customers.

Data is at the heart of how enterprises operate today and essential for successful software development, but accessing and managing that data is extremely challenging. Many teams do not have rapid access to solid, high-quality test data. Imagine something the size of a relational database, with all the data to collect and piece together to make it testable — this is both labor-intensive and very difficult to achieve.

All that changes with Delphix. This outstanding platform provides fast, on-demand access to data in a safe way. Delphix protects and masks customer data, giving teams the right data securely and quickly so they can focus on creating great software.